An Introduction To Code Compilation - Tom Blue

Show transcript [en]

okay it's it's working now so um so I'm Tom um um working at Crow strike as an intelligence analyst intern but that has nothing to do with this talk I I wrote the slides about four months ago and I haven't seen them since so I'm presenting to myself as much as I'm presenting to you guys so um so my talk is on how code compilation works so let's start off by talking about why it's important to understand the way it works um I mean compilers are tools which a lot of us will have used um and it's good to understand how our tools work to be able to use them most effectively and it helps us understand

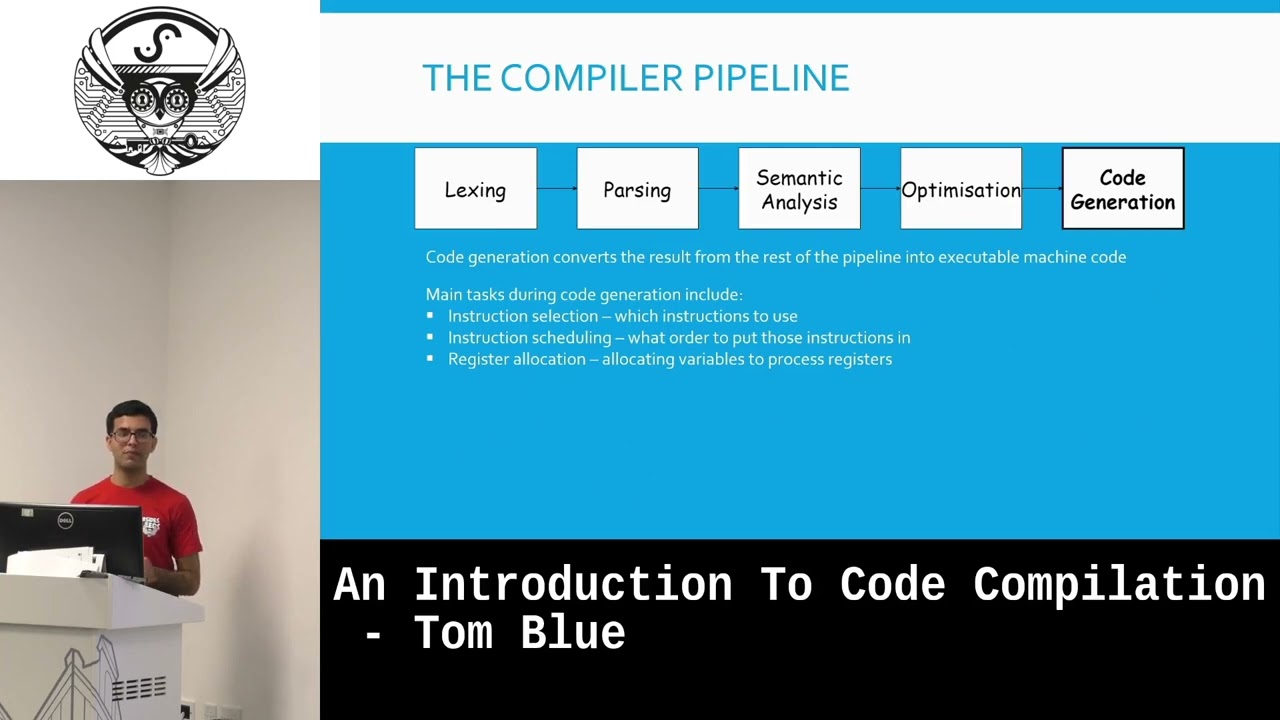

attack vectors which we otherwise wouldn't have considered um with there was a quote I was going to like say but I forgot what it is oh well um moving on so uh let's have so we're going to look at a general overview of like this is kind of a high level of how a comp pipeline might look there's Lexing passing semantic analysis optimization and code generation we'll go through each step and explain what it is and how it works so starting with Lexing Lexing effectively takes a string of characters and turns them into tokens that have meaning so let's say VAR a equals varb plus 100 you split that up character by character then you remove

the white space you group them up into Strings into sorry tokens and and then you give those tokens meanings so like v a and v b are identifiers 100 is an integer and equal and plus are symbols um those tokens are then fed into the passer the passer takes these tokens and generates what's called an abstract syntax tree the um the syntax tree effectively takes the tokens which have a bit of meaning in terms of what the um what tokens are and it kind of gives context to them so by looking at the syntax tree you can see that you perform addition on bar a plus 100 and then you perform assignment between bar a and the

result of that addition you can kind of follow the tree logically and um and work out what what lexim the tokens produced by Lexa actually mean um it also enforces structure passing is the stage where any syntax errors would be picked on because if something can't be turned into an abstract syntax Tre that means there's some mistake somewhere in the syntax of it um however PES can pick up on more um on on more complicated um issues which is where semantic analysis comes in passing enforces the structure of your code semantic analysis makes sure your code actually makes sense um semantic analysis could be its own talk it's a very very comp complex topic um

but the two bits of semantic analysis which I want to cover are type teing and name binding which are probably the most important bits uh type checking is just the process of verifying and enforcing types which is um more relevant to uh dynamically typed languages like python um and JavaScript although JavaScript is yeah so um if if we take this example code of our a equals words and we try to add 102 to it this would result in a type error and that's picked up on through type checking because uh s yeah because VAR is assigned um the type string and you can't implicitly add an integer to a string that hence the type error it also applies to uh passing

things into functions as parameters so if you try and pass 12.5 into a function that takes a character that's also going to result in a type error because because you can't pass a flow in where you where it's expecting a character and type checking also deals with type inferences so if RB is equal to the result of a function then it'll um infer what type of RB is going to be based on the return value of the function however at this stage we don't know what the function is returning um so that's where name binding comes in name binding effectively Associates code with um with identifiers so if we um It's So yeah so there's two

types of I don't remember what I actually put in the presentation so I'm kind of seeing it at the same time you are um so there's two types of name binding the static name binding and dynamic name binding static is uh static happens at compile time um it's things that the compiler knows whilst with just a source code and dynamic uh name binding takes place whilst the process is running things like Dynamic dispatch in C++ um where your where where at compile time it's impossible to work out what a function is for example like if you're loading in a dll um so if we take this example code we know from the integer return type of

function B that VB is going to be an integer so then we can perform um type checking to make sure that VB equals 5 is a valid expression because you're comparing an integer to an integer um yeah and so then moving on to optimization again optimization is a huge topic and it could also be its own talk um but there's two optimizations which make up the vast majority of performance gains when compiling code uh that's inlining and partial evaluation inlining is the process of putting functions within functions when you call a function it's quite an expensive operation for a CPU to do it will take several lines of code several instructions even to um it's to call function because you need

there's um shifting around with registers there's all kinds of things to switch context um so if let's let's take this example code um if we have um a function B which is very very simple all it does is return a AA and we reference that function in fun C so what we can do is we can get rid of function B and replace the return value in function C this increases the size of the code but it reduces the number of context switches and it reduces the number of um the number of function that have to be called which increases performance and for small function this make sense um this doesn't happen with very long

functions so the first function very very long function would not um go through inlining um you might assume that that's because it's going to make the code too big but that's not actually completely the case it can also reduce performance if inline um functions that are too large because what the CPU does is it will cach the next few instructions so that it can execute them more quickly without having to go um to memory um and if you skip past through too many lines of code let's say if varc um is false which it is in this case if it skips past the very very long function it will skip to some code which hasn't been cached yet and so then it

has go to memory to receive it to retrieve it this is called a cash Miss which um which could happen if you inline functions which are too long um there's supposed to be animations there to kind of like show what I was just talking about but I I I'm just going to Hope I explained it well enough um but yeah um the other part of um the other part of the other optimization I want to talk about is partial evaluation which is where you propagate known information so let's take this example code again we know that vars is equal to false and assuming that vars doesn't change um before funy is called we know that um funy will

never enter the very very long function it will only ever enter um the the um function B if you replace the function B with a a sorry if you replace the um if we replace the call to function B with just its code and we also get rid of the um if statement because we know that that's NE it's never going to go into the first condition I this is an extreme example but you can see how much code has been kind of reduced through inlining and partial evaluation um uh and partial evaluation also refers uh also applies to something called constant folding which is where rather than having a constant in somewhere in your code has to be

referenced constantly uh compilers will sometimes take the constant and just replace every every um every time that uh con is referenced with the value of it so if we look at this code we could replace every reference to constant a with just 533 and then that allows us to look at Funk B where we're adding an integer to a constant and we can evaluate that during compile time which is one less instruction for the comp for the CPU to do whilst in runtime hence improving performance so after all of these stages of compilation you finally get to code generation which there isn't an awful lot to talk about but there's kind of three main tasks in in code

generation there's instruction selection which is deciding which instructions to use in CIS architectures complex instruction sets and like Intel and AMD um there are many many functions that can do the same things and so the so the compiler has to decide which functions to use to do certain things there's also instruction scheduling U which is what to put those instructions in because the order of instructions can drastically change um change the performance and just as an example um over term time I work as a researcher at the University and I do embedded system security stuff um I was writing some code for an embedded system and I was writing assembly directly um it was in in C I'm not that

insane um it was in line assembly and see uh I had it I changed some instructions about um whil try effectively refactoring it and it reduced performance it it was like 800% slower um through through like a single change of swapping around instructions it it can have a bigger impact than than you'd expect and then there's also register allocation which is just deciding which registers refer to which um variables which is also quite important because um often times well often times different registers can be accessed at slightly different speeds and variables which I reference more often should be put into quicker registers to then be accessed quicker so yeah that was the entire compiler pipeline um this doesn't really

represent what a compiler looks like this so old llbm um sorry old school C compilers might look like this but modern compilers um are quite different this is what an llbm compiler might look like which is a kind of a more modern C compiler where rather than having one stage of optimization you have what's called an intermediate representation which is kind of in between your source code and the actual um machine code that's generated at the end and you can apply optimizations over and over again on that intermediate representation and that allows you to kind of achieve more optimizations than you otherwise could um if you had just um if you just had one pass of

optimization um C for example uh as another example um also uses intermediate representation they don't call it that they call it an intermediate language but I'm going to use the same term everywhere because it's basically the same thing um C uses what's called adjus in time compiler so when you execute a c program unless you specify in the compiler to compile it as machine code it will actually just output um a b executable with the Intermediate Language and the Intermediate Language um will just time compiler will compile things just in time so everything before the blue wall happens at compile time everything after happens at runtime and during runtime the uh just in time compiler will apply optimizations and go

do code generation just before ex uh just before instructions are executed this has the added advantage of um often times during run time with the context of what's happening right now you can apply optimizations which you otherwise couldn't which can sometimes lead to more efficient code often times not but sometimes if there is context um during runtime that the compiler can use it can result in more efficient um a more efficient machine code being generated and uh this is what an interpreted language like um Java or um a python might look like and um and so the way um interpreted languages work is the intermediate representation goes into what's called a virtual machine which runs at runtime and the virtual

machine can decide whether to go through code generation or go through The Interpreter The Interpreter allows the virtual machine to uh to kind of have it's much slower but it has more of an understanding of what's going on and and can therefore feed into the optimizations and um if the code goes to code um generation between the virtual machine and code generation optimization to be applied based on uh information that The Interpreter has to therefore produce more efficient code um I was briefly considering making every diagram like this but um but yeah um so um so so so we talked about how compilers work but we've not really talked about how kind of languages are

designed how the passer know knows what structure to enforce how the semantic anal how semantic analysis knows what to kind of expect and those are kind of defined in what's called a formal grammar a yeah formal grammars uh Define the structure of languages um it defines how to Lex it defines how to pass it defines semantic analysis so um so so if we take this example again from passing where we have r equal VB plus 100 uh simple formal grammar for this might look like this where we say an assignment is an integer plus an equal sign plus an expression an expression is an integer plus an uh plus sign plus an integer an expression is also an integer

and an expression can also be nothing so we can then we can then say um if we know V is an integer we have two integers um we two integers so we can turn that into an expression and then we can use the assignment to turn this into an assignment um so we've evaluated the syntax tree as being an assignment um through using this formal grammar so um so this would be kind of a more General formal grammar that would cover more Expressions this is also not what an actual formal grammar would look like but um you can kind of see how um you can apply the same Concepts to this where value can be any type of variable

um a variable can also be an expression it can be nothing and then you define what an expression is and you define what an assignment is if we were to write this properly in what's called BNF which is what formal grammars are typically written in um it would look more like this uh the reason I went through kind of a more casual look at it is just so that it's less intimidating than than hitting you with this straight away um the Epsilon in this case just means means nothing so you can say a value is equal is equal to Epsilon or an expression that means it can be equal to nothing or an expression so um as a practical example

let's look at Json in uh BNF this is the formal grammar for Json um and we have a bit of Json on the side so so we can look at um we we go as far in as possible the apple and Apple 2 are both values so we can replace those with values then um then we have a pair because we have a string L literal a colon and a value so then we can turn those into pairs then we have Pairs and te pairs so we can turn the entire thing into Pairs and then um those become objects and then with values values question mark and T values uh the array of objects becomes

values and then we apply the same idea again with the rest of the rules to turn everything into Pairs and then because we have pairs between two um object the two um curly brackets that becomes an object so we can evaluate kind of by hand adjacent expression using its formal grammar um and evaluate the object into an object um and yeah so then finally uh supply chain attacks so I kind of referenced at the beginning of the talk that understanding how compilat work can allow us to consider attack vectors which we otherwise wouldn't have um and one such a attack Vector um I'm I'm going to explain now so compilers are compiled by compilers you need a compiler to make a

compiler and compiler modifications can quite easily propagate because if you modify a compiler and that compiler is used to make another compiler you can propagate that change from the first compiler into the second and from the second to whatever else um there was a talk sorry there was a paper in 1984 um Reflections on trusting trust which came up with the idea of modifying a c compiler to include malicious code um in anything it um in anything it compiled and also propagating that change to any compilers compiled by that compiler um the idea being that you could have perfectly secure source code um you could build it in a perfectly secure environment but um if the

compiler is compiled by a malicious compiler then your code would become malicious through compilation it's it's a it's a supply chain attack and um this was actually um it sounds very theoretical but this was actually this actually happened in 2015 um the Microsoft Visual Studio C++ compiler um was making calls to Microsoft Telemetry um Library liaries which Microsoft had injected into it so even if your code wasn't sending Telemetry to Microsoft Microsoft had injected code into their compiler to send elemetry back to them um which kind of illustrates that this this um can actually happen and yeah even complete analysis of the source code won't pick up on any vulnerabilities because if you analyze a

source code that's not where the malicious code is being injected it's during compilation which is what makes this so difficult to uh is such kind of an Insidious attack Vector um there is also one remediation I want to discuss about this which is diverse double compilation um it was discussed in in Willow's PhD dissertation and it's basically the idea of using a trusted compiler within the same family of compilers so uh compilers come in kind of families where if you change one compiler to make it more efficient or whatever that's a new compiler but it's in the same family as the old compiler um if you have one known good compiler within a family you can

um you can test the output of the new compiler versus the old compiler um and I I I don't exactly remember the formal proof but there was a proof that you can show there's even with optimization changes you can show that the two pieces of code that were generated are identical in function the idea being that if you have a non good compiler you can then verify an unknown compiler to make sure that that's also not had uh not injecting malicious code into your into the code you're compiling with it um yeah so let's see sorry for the complete um and this is the first time I've seen the presentation in four months so I any questions any questions

yeah so I guess a modern format of the attack is kind of written up by this loson talking about using by directional unal characters in streams that um BS um how they're part into systems so I'm thinking about AI way of feing in characters and you actually if you don't have appropriate validation you end up with all sorts of messes that end I guess I'm just trying to Fig that where such kind of attacks would happen I work in low level ined systems for crypto modules I do worry about this sort of thing um we also worry about um things like smart contracts solidity where I can't form veryy compiler so I don't know it's just an

observation and com know what your are um so what exactly is that you want me to comment so with the I'm just wondering modern applications for such comp for such compiler attacks I mean um I'm not very familiar in how smart contracts are created but from my understanding there is some kind of compilation step um I suppose given how Niche smart contracts are it could be possible to create a compiler which will add some kind of factor which allows you to break the smart contract without the person who's writing it being um aware and from my understanding smart contracts are generally when they're audited they're they're auditing the smart contract itself not the compiled kind of output of it and so

that would pass audits without anyone really noticing um I think that's probably probably quite possible um attack Vector in that context yeah would another type of remediation be maybe automated testing that tests the comp so it depends on what kind of malicious behavior that um I think the only issue with that would be you don't really know what the compiler might be injecting into your code if what types of things are yeah like if you have some idea of what malicious code might be injected you could test for that but if it's just a case of malicious code might be injected you don't know what that is that wouldn't really work unless you was typically done and then yeah

yeah that yeah well thank you very much