Containing the cloud

All right, so for our after-lunch talk, I know you guys have full bellies, but thank you for coming back here. This will be Wes talking about container security in "Containing the Cloud." Thank you very much, guys. All right, now is when we need the applause track. All right, thanks, guys, for coming after lunch. Like I was saying, I'm not sure whether this was deliberate or not, but I'll take it as a compliment. So, container security. One of the things I want to put out here: I've delivered this talk a couple of times, but now I'm starting to move more towards the engineering view of containers. A little bit of background: I'm a cloud engineer at CrowdStrike,

basically a data janitor, and kind of obligated to say we're hiring, so that's who lets me come out to conferences like this and talk to you fine people. So if you're interested, if you want to do big data in the security space as a vendor, let me know, hit me up. A little bit of background to this talk: earlier this year CrowdStrike was deemed FedRAMP Ready, and then even more recently we became fully authorized to work in the federal space. What that means for you, not just tooting our own horn, is that we used a lot of Dockerization and containers to do that, and that includes a lot of auditing, a lot of really

fine scrutiny of what's going on at a very low level. That's actually the genesis for this talk, because we wanted to know what it would look like if our environment got compromised, and since we're a security provider for federal customers, they really want to know that too. So that's kind of what drove all this. Some additional context is that we're using Kubernetes in Amazon's GovCloud region for a security application for customers, and it's for a lot of security-conscious organizations, so they want to know all the way down to what an attack profile would look like, all that. Now, the bearing that has on most other

companies is that there's polling that shows companies want to move to containers, but their primary concern is what the security posture would look like, because containers kind of seem to be black boxes. I'm coming at the question of container security more from a systems engineer's perspective. We do about 200 billion events per day, and that's like 16 terabytes every six hours or something like that, an enormous amount of data. That will come into play later, but performance and security both have to be considered at the same time at a really high volume. So first, I think it's

helpful to step back and ask, what are containers? Because not everybody has had experience with containers. How many of you guys have worked with containers? How many of you are using containers in production? Are you using an orchestration utility, like Kubernetes? Okay, a lot of different orchestration flavors. Oh yeah, yeah. So that reminds me of my introduction to containers. I was working with a company where we had a web app, and I was on call, and I got tired of being paged because the app would just fall over. It was a Scala web application, and I really didn't care what the exception was. Some engineer wrote something that didn't deserialize

properly, whatever. Didn't care. I wrapped it in a container and deployed that just on the host itself with a restart-always flag, and that was the beginning of my Docker experience. "Containers" is kind of an overloaded term here: there's iOS, Android, OS X, Windows, there's all kinds of containers. What I'm going to talk about is containers as application deployment. Anybody ever seen this xkcd comic? I don't know that you can actually read it there, but the key point is that most people are introduced to containers in the sense of: I want to go play with this application, let me go look on Docker Hub, pull something down, it's running real

fast, and I can just work with it. It's almost like magic. Now, there's pros and cons to that. The pro is you can get working on it really easily; the con is it could be anybody's code, it could be anything in there. So, software development lifecycle: the simplest way to deploy an application is just a binary, just push that over. Facebook had the famous two-gig binary that they pushed out that had their entire app code. I don't know if it's still the same. But generally you want to rely on other pieces of software, other libraries, and this is where it starts getting hairy. So then we need to track down all

these dependencies to make our applications repeatable in each environment. The problem is, once I start depending on other applications, I have to track their versions, and I have to trust them to make sure that they're actually using semver properly, that they're not overwriting versions with newer versions or anything like that. So there are peculiarities, and they call for a really complex deployment system. Containerization, in the Docker sense, is: I want to freeze-dry everything and deploy it all together, all the dependencies, system dependencies, everything, so that I know that when I deploy an image in integ and it passes all my checks, that exact same

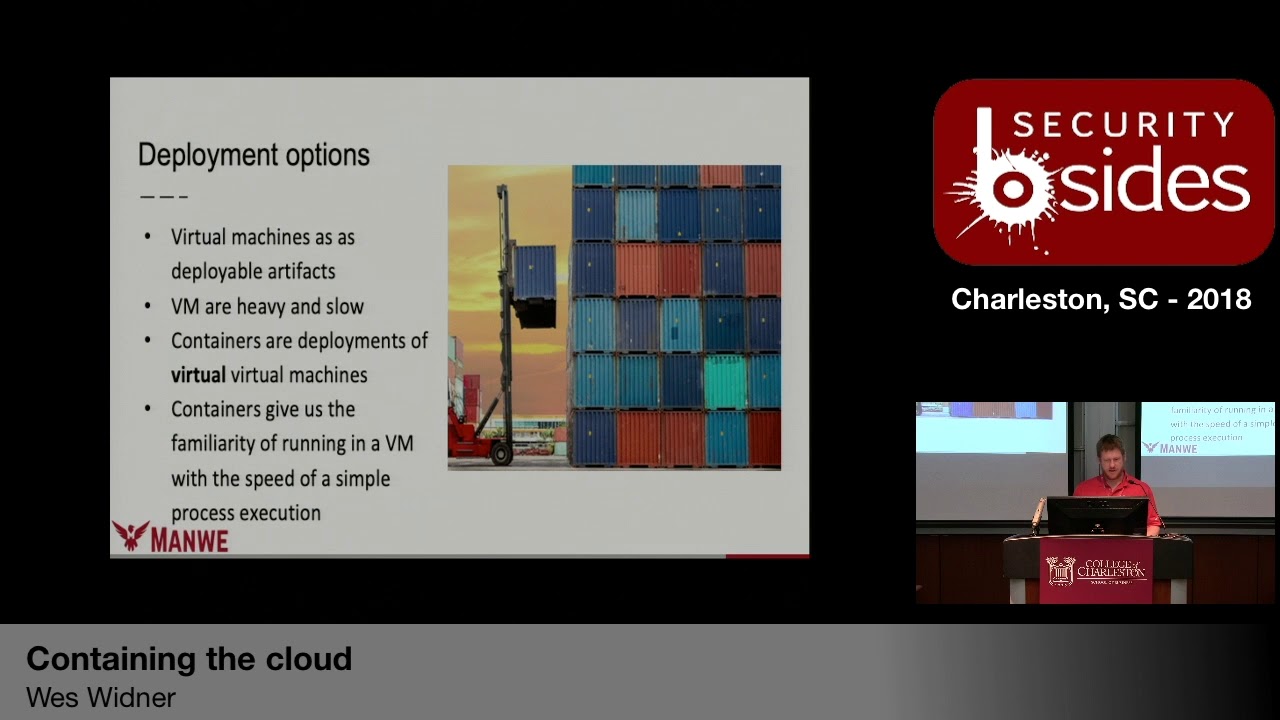

code is going to be running in production. There's no weirdness that goes on with a library getting swapped out or something like that. Some of our other deployment options besides containers are virtual machines: we could deploy an artifact like an entire machine as an image. This is basically where cloud 1.0 took off, where we started baking everything into AMIs or virtual machines with VMware or something like that. The problem, though, is they're really heavy and they're slow. Containers are kind of a subset of virtual machines; in other words, they don't virtualize all the hardware. It's just what sits on top of the kernel, so the libraries. In fact, running a container is

just running a process with a few caveats to it. One of the interesting things here is the attack surface. If you're deploying an application on bare metal, it's pretty much the most secure, because it's got its own dedicated hardware, everything. VM architecture is more secure than container architecture, and you can kind of see here why: there's no hypervisor between the container and the host OS. This is one thing that really needs to be brought out in understanding containers: you lose some security, because the hypervisor provides a lot of security. Here's another example: there had been 12 VM escapes up until 2017, when I pulled this data, and a lot of these are for really

silly things like floppy disk controllers and VGA drivers, things that you shouldn't have installed in your VM anyway, right? By comparison, the attack surface for the container is the kernel. The kernel has a lot more vulnerabilities, like almost 2,000 vulnerabilities up to 2017. That means we've got a much wider attack surface here. Containers are separated by cgroups and namespaces. It's really just a convenience function; it's not for security. Containers kind of have a connotation that they're going to contain the application, but they don't, unless it's something like BSD jails, which was a similar concept executed probably with a lot more security in mind. Containers on Linux are really just: I

want to treat this group of processes as one unit, so that I can track all of their memory consumption, CPU consumption, all of that, in its own virtual namespace, and then I can apply things to it, like renicing an entire group of processes at a time rather than hunting down all the dependencies. Another issue with containers is that when you freeze-dry your entire application and all the libraries, you freeze-dry all of the vulnerabilities in those libraries as well. So if you're running a Python application and it pulled in Flask, I think Flask had some kind of vulnerability recently, then you have that vulnerability, and every time you go to deploy that image, it's

always going to have that vulnerability baked in. So you need to track and scan both the base image as well as the application libraries. I would argue that container security starts with creating the container itself. I use "container" and "image" interchangeably, that's my problem, but technically you're creating an image, and the image that you create is built up in layers. Specifically, there's a copy-on-write system, so operations in your Dockerfile create new layers. Not entirely every one, but when you're running commands, when you're copying things into your image, they're creating a bunch of new layers, and what Docker will do is

at the end of the Dockerfile it'll squash those down if it can, and here are the layers of that image. Ideally, as a software engineer, an update to my application would be just one layer change. So what we can do is have a common base layer that all of our applications use, with things like common users, common libraries, all that other stuff, and that'll be important later as we get into how that fits into the software development lifecycle. So, security starts from the base image. Docker allows multiple FROMs, a multistage build, so you can actually pull in lots of different images and copy bits and pieces out of them, but the very last

FROM is where your image is going to run. So one thing we need to do is make sure that our FROM image is trusted. There was, I forget the company, but they did an analysis of all the base images on Docker Hub, and a lot of images have vulnerabilities, but the trusted official versions don't. There's been continuous active scanning of the official base images on Docker Hub, and there's a lot of other places that do that. So pay close attention to who you're pulling the FROM image from. Docker used to have this thing where anybody could just push binaries up there and pull them down. Since then

they've gotten to where it has a star if it's an automated build, and you can go inspect the Dockerfile that it was built from and all this other stuff. Best practice: pull the Dockerfile that the person provided and build it yourself on premises. That way you know that there's nothing else in there. Another thing with Docker containers: the IDs for the images used to be arbitrary; now they're the SHA-256 of the contents of that image, very similar to Git hashes when you're making new commits. That means you have cryptographic certainty of what's in the image. I know

that if my hash matches over here, then the contents are going to be exactly, byte for byte, the same over here. One thing to point out too is that with Docker, everything that you write in your Dockerfile shows up in the history of that image. One of the things we did at CrowdStrike is we released the first machine-learning antivirus to VirusTotal, and this was one of our first Docker efforts. It was an interesting case, because we were going to take that image and give it to VirusTotal, and while we like the guys at VirusTotal, we don't trust them, like anybody shouldn't trust the other person

that you're handing stuff to. So what we would do is take our image, export it, and re-import it to get rid of all the history, all of the Git history. Even with that, one thing that we really have to pay attention to is not putting our secrets in our build scripts, because that output shows up in logs, and it also may unintentionally get freeze-dried into the image. This is something that bit, I think it was GitHub or GitLab, one of those companies, recently: they actually had their secrets baked into the history of the images that they were building. So this stuff happens. One thing that I like to

do is use labels in the image and put in things like the Git hash that it was built from, the user that built it, all kinds of auditing information that I just like to keep in there, so that if something breaks, I can look at that meta information and track it back to something. Last thing is the builder pattern, or multistage pattern. There's a temptation, at least in the early days of Docker, to take your image, build whatever you were going to build in that image, and then leave all of your build tools in the image. The idea here is, if we use

different stages, one image to build our application and another image for the final release of it, we make it a lot harder for attackers to live off the land. One of the core engineers at Red Hat has this great talk that's basically "containers don't contain." If there's nothing else that you take from this, understand that containers aren't inherently secure. So, things like root: root in a container doesn't map to root on the host, but it's still problematic, and the problem there is file ownership. The UID in the container and the UID outside the container don't match; it's going to pick some arbitrary value. So file ownership between guest and

host is really terrible. If you have to share files, do it read-only. I would say that you should architect your applications to not use it at all. A tight coupling between the host and the guest is a code smell, so try to make it so your application reaches out to something like an S3 bucket for configuration information. So containers do provide minimal protection to the host operating system. They're not architected to be secure, but they do drop some privileges, which we'll get to in a second. Here's some quick wins that we can get: we could disable 32-bit compatibility mode in the kernel, and

that cuts our attack surface in half. If we go back and look at some of the kernel vulnerabilities, a lot of them are from 32-bit-mode drivers or system calls that are just neglected. A lot of the exploits, like in the very last session in here, one of the ROP exploits that I found worked by forcing it into 32-bit mode, because that's often neglected. So if we turn that off in the kernel, then we cut our attack surface in half. And if we mark our container as read-only, then we make it a lot harder for any attacker to live

off the land. Not impossible, but a lot harder, because then they can't pop a shell in the container and just start downloading tools and using them. Another tactic that we use is to recycle containers often. This makes it even harder to live off the land, because if we're recycling containers, we can also recycle the certificates that those containers are using, and we make it a moving target. At that point it's all repeated: the root thing, also repeated some of the others. Oh, the last two points here, I guess I needed to make those a lot darker. There's a concept that's emerging in the container world of applying microservice architecture to the application, so

containers really fit well with a segmented application that does lots of different things: you break up a distributed application, and each little component is its own service, and they all work together. Applying that to container security is grouping those functions not just by what they do but by what access they need to do what they're doing, if that makes sense. Most applications need to read from a network socket, but what about reading a file, or writing a file, or doing device operations or something like that? If we can turn off the kernel capabilities on this container over here, then we can

really shrink what that application needs, its capabilities, really far down. So we're applying capability-based security to the container, or to our application architecture. What that would look like is, as you go to architect your application, not only are you asking what component needs to be split out so that it can be scaled independently, but also what that component is doing. One thing you can ask as an architect is: what's the diversity of the different system calls that this application needs, and can I cut that down? Especially system calls that are very risky, like, does it need lower-level privileges to do a ping or

something else from the container? Can I abstract that away? Can I do health checks in a different container, so that that container has its own set of privileges? Can I cut out file access altogether? Does my application just not need to read files? Could I have some other container that's in charge of reading files and just provides a network connection to a set of other containers, so that we don't have every container reading off the disk? Stuff like that. I view containers as a unique opportunity. Security is usually bolted on after the fact, or traditionally it has been bolted on after the fact for applications, but

as we get more and more into containerization, we can move security into our software development lifecycle. One of my favorite quotes is on the healthcare.gov tech surge. Do y'all remember healthcare.gov when it was first launched? Remember what happened? It fell over. So they went and asked several tech titans to weigh in on what they would do, and Bill Higgins has a great talk on this. His suggestion was to tell President Obama that the team should deploy to production every day, and the reason is that it smokes out a lot of bad practices in your development, if your application is really cumbersome and you have to do all these things.

So at CrowdStrike, when we went to wrap our cloud up and put it in another place, we learned a lot about shortcuts that had been taken, so we had to go back and fix those. Those are just good things to fix in general. This is part application security: going back, making your application configurable, making sure that you don't assume things about your environment, writing your application so that it reaches out and asks the environment where it's at. A great example recently is that we were passing in region information for AWS; now we just go and ask what region this thing is in and use that, instead of having it configurable.
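One way to picture "asking the environment where it's at" is the sketch below. It assumes the application runs on an EC2 instance and reads the region from the instance metadata service using a session token (IMDSv2). The talk does not say how CrowdStrike actually does this, so the function names `fetch_from_imds` and `discover_region` are hypothetical, and the fetcher is injectable so the lookup can be exercised without a real instance.

```python
import urllib.request

# Link-local address of the EC2 instance metadata service.
IMDS = "http://169.254.169.254/latest"


def fetch_from_imds(path, timeout=1.0):
    """Read one metadata path using IMDSv2: get a session token, then GET."""
    token_req = urllib.request.Request(
        f"{IMDS}/api/token",
        method="PUT",
        headers={"X-aws-ec2-metadata-token-ttl-seconds": "60"},
    )
    with urllib.request.urlopen(token_req, timeout=timeout) as resp:
        token = resp.read().decode()
    data_req = urllib.request.Request(
        f"{IMDS}/meta-data/{path}",
        headers={"X-aws-ec2-metadata-token": token},
    )
    with urllib.request.urlopen(data_req, timeout=timeout) as resp:
        return resp.read().decode().strip()


def discover_region(fetch=fetch_from_imds):
    """Ask the environment for the region instead of reading it from config.

    `fetch` is any callable that maps a metadata path to a string; it is
    a parameter only so the function can be tested off-instance.
    """
    region = fetch("placement/region")
    if not region:
        raise RuntimeError("metadata service returned an empty region")
    return region
```

On an instance, `discover_region()` would be called once at startup; anywhere else, a stub such as `discover_region(lambda path: "us-gov-west-1")` stands in for the metadata service.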

So there's a lot of great practices that come from trying to shorten that development or deployment cycle. I would say continuous deployment is facilitating continuous security; the way to move security forward is with the DevOps tooling. How many of you guys are familiar with DevOps, continuous integration, continuous deployment? One of the promises of containerization is that we can wrap up lots of different tools and put those in different pieces of the pipeline, and I'll show you guys how to do that in a second. All of these are their own individual tools, and if we can make our deployment pipeline pluggable, plug and play, then we can add security tooling as

we go along, transparent to the developer, but also in a way that we can tell the developer how to do things in the best possible way, so that it's not after the fact, where you're doing a pen test or an audit and somebody comes along and says, you know, the way that you were writing your application all along was really awful, and then you have to rip all these things out. If it's baked into the deployment pipeline, you get immediate feedback on whether you're doing good things or not. For example, one thing that I've been really excited about recently is using various linting tools. There's linting for Dockerfiles themselves.
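To make the idea of linting a Dockerfile concrete, here is a toy sketch. The three rules (pin the base image, no secret-looking values in ENV or ARG, set a non-root USER in the final stage) are illustrative choices, not the rules of any particular linter, and `lint_dockerfile` is a hypothetical name. It ignores line continuations, legacy `ENV KEY value` syntax, and registry hosts with ports, which a real tool would have to handle.

```python
import re

# Names that suggest a secret is being assigned in ENV or ARG.
SECRET_HINT = re.compile(r"(password|passwd|secret|token|api_?key)\s*=", re.IGNORECASE)


def lint_dockerfile(text):
    """Return a list of (line_number, message) findings for a Dockerfile."""
    findings = []
    saw_user = False
    last_from = 0
    for lineno, raw in enumerate(text.splitlines(), start=1):
        line = raw.strip()
        if not line or line.startswith("#"):
            continue
        instruction, _, rest = line.partition(" ")
        instruction = instruction.upper()
        if instruction == "FROM":
            last_from = lineno
            saw_user = False  # every build stage starts over as root
            # Skip flags such as --platform=... to find the image name.
            tokens = [t for t in rest.split() if not t.startswith("--")]
            image = tokens[0] if tokens else ""
            unpinned = "@sha256:" not in image and (
                ":" not in image or image.endswith(":latest")
            )
            if image.lower() != "scratch" and unpinned:
                findings.append((lineno, "pin the base image to a tag or digest"))
        elif instruction in ("ENV", "ARG") and SECRET_HINT.search(rest):
            findings.append((lineno, "possible secret baked into the image history"))
        elif instruction == "USER":
            saw_user = True
    if not saw_user:
        findings.append((last_from, "final stage has no USER; it will run as root"))
    return findings
```

Each finding carries a line number and a plain-language message, so a build stage that runs the check can fail and still tell the developer what to change.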

There's also linting for the final outcome, the image: you can lint through that and see what's going on in the image, like what your startup scripts look like, things like that. The bash that you would write in your Dockerfile can be linted. Everything can be linted. One of my friends, Chris Sanders, has this great talk on security and nudges. It's interesting, like psychology applied to security practices. In fact there's a book, Nudge, which is about setting defaults. So as security engineers, or as security professionals, if we add good linting tools to the development pipeline, we can help developers write more secure code, not by coming in after the fact and being a

nuisance, but by helping out. We can write our linting tools to say, you probably should go ahead and close this file, or write the close handle here, as you're writing everything. What that looks like is: as code is checked in to Git, it goes through the build pipeline, all of our linting and everything is done there, and then it goes through deployment. That's not really a useful slide. So, automated security tooling. In 2017, 24 percent of images in public repositories had high-severity vulnerabilities, and yeah, this goes back to the freeze-drying. Okay, trusted registries: Docker has a trusted registry, Google does too, where they continually scan images at check-in time, and they also scan them at rest,

because an image that's in the registry could develop a vulnerability, or one of the libraries becomes known to have a vulnerability later. Like I was saying earlier, your image has a hash, all the layers under it have their own hashes, and so if you're continually scanning your images, you can say this layer has a vulnerable version of some Python library, and then you can go and say every image that has that common layer in it is also vulnerable. So I encourage you to look at things like goharbor.io. That's a registry system that includes a trusted registry, and it also includes continuous scanning before you promote the image to another registry.
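The point about layer hashes can be sketched in a few lines. The example assumes you already have, for each image, the ordered list of its layer digests (for instance from the image manifest). The helper names `layer_digest` and `affected_images` and the sample image names are hypothetical, and a real registry scanner does far more than this set intersection.

```python
import hashlib


def layer_digest(content: bytes) -> str:
    """Content-address a layer the way image digests are written: sha256:<hex>."""
    return "sha256:" + hashlib.sha256(content).hexdigest()


def affected_images(images, vulnerable_layers):
    """Return the names of all images that contain any flagged layer.

    images: dict mapping image name -> list of layer digests
    vulnerable_layers: iterable of digests a scanner has flagged
    """
    flagged = set(vulnerable_layers)
    return sorted(name for name, layers in images.items() if flagged.intersection(layers))


# Two applications share a base layer; a third is built on something else.
base = layer_digest(b"common base: users, libraries")
other_base = layer_digest(b"a different base image")
images = {
    "billing:1.4": [base, layer_digest(b"billing app code")],
    "search:2.0": [base, layer_digest(b"search app code")],
    "docs:0.9": [other_base, layer_digest(b"docs app code")],
}

# Flagging the shared base layer flags every image built on it.
blocked = affected_images(images, [base])
```

Because identical content always hashes to the same digest, one scan result for the shared base layer is enough to mark every dependent image, and the resulting list is what a registry would refuse to deploy.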

And then you can also blacklist images, pull those back out of the registry, and say this image can't be deployed because it's no longer trusted, because we know that there's a vulnerable hash in it. So all of this is before we ever run the image. This is just building the image: how we construct it, how we think about it, how we pare down our kernel syscalls, how we limit the image that's running to just our code. The next thing we can do is protect our applications as they're running. Docker drops a number of permissions, or capabilities, by default, and there's a great doc on that. It drops not file-based

permissions, but a lot of other root and extended permissions. There's two profile systems that we can use, AppArmor or SELinux, and one of the problems with this is that neither one of them is blessed by the Cloud Native Computing Foundation, and also one of them was recently publicly attacked by the creator of Linux himself, Linus; he went after the AppArmor guys. Google has gVisor, which, if you remember back to the slide on what the applications depend on, basically adds an artificial hypervisor into the container ecosystem. I've heard rumor that there are going to be other attempts at a hypervisor shim that allows for you

to set policies at the hypervisor level. Until then, gVisor is an option; I think it's headed in the right direction. Another thing that you can do is trace your application, and there are tools to do this: trace your application as it's running to see what syscalls it's actually using, and then you can create either an AppArmor or SELinux profile, or just drop capabilities that your app doesn't need. That makes it even harder for someone to compromise your container, because even if they popped a shell in the container, which they shouldn't because you're not baking a shell into your container in the first place, but even if they were to get something

running in your container, they're not able to do something outside of what that container normally does, such as reading a network socket directly or something like that. So that ties into dropping capabilities as early as possible. One thing I mentioned before is turning continuous integration into continuous security. The new hotness is to use Docker to build Docker to build on Kubernetes. So Docker on Docker using Docker, it's turtles all the way down. The way this works is you use a Jenkinsfile. How many of you guys use Jenkins? Anybody? Anybody use Bamboo? How many of you are familiar with Jenkins? Okay, so Jenkins is basically a tool

for running something with multiple steps, like here's my recipe or whatever. Using a Jenkinsfile, what you're doing is following the practice of treating your configuration, or how you build your application, as another layer of code. And this is where, like what you were mentioning earlier, you've got a diverse ecosystem of containers; it's probably because a lot of companies start this way, where you don't properly respect the fact that you're creating code. All the configuration is code itself, right? And so there's usually got to be this big push to say, no, let's split this stuff out into its own repository and treat it as code. And one

of those things that needs to be treated as code is the Jenkins configuration itself. So I'm a big proponent now of moving things into Jenkinsfiles, which can be tracked as code, rather than clicking into the Jenkins master and writing the steps there in the script field. One thing we can do with Jenkinsfiles is set up a staged Docker build. Each one of these stages can invoke a Docker image as a build agent. That means Dockerfiles; that means your build is now Dockerized and can itself be swapped out once you find vulnerable things or want to upgrade something. This is where your security tooling fits in: security

tooling ends up being a stage. Linting ends up being a stage, and you can have as much linting as you want in there. Well, it's not exactly free, but it's mostly free. Then as a security engineer you can come in and write your security pieces as either a pre-linting or post-linting check, and if it fails, it fails the entire build, right? You can also write your tool to be really nice and tell the developer why it failed, and that's what you should do as a nice security person, not just leave them hanging. We've had great success with this across the company, so that rather than telling every team, no, you

have to develop it our way, it's: go do it however you want, but it's going to go through our checks, and they're going to be mandatory checks, and we'll provide you with feedback, and eventually we'll get into this secure state. The whole thing about continuous integration is that as the plane is flying, we're swapping the engine out, which is a scary prospect if you haven't built for it. Going back to the quote from healthcare.gov, we're continuously moving and hopefully improving. So, automated security tooling: we covered all that earlier, but I really like that image, basically X-raying into the container. I mentioned earlier too: the OCI spec doesn't include coding; it does

include the file layout and the runtime spec. It doesn't specify a security model, so AppArmor and SELinux profiles are not included in the Open Container Initiative spec. That's because they haven't figured out which one they're going to go with, if either; I have a feeling it's not going to be either. The standardization bodies that you should follow are the Cloud Native Computing Foundation and the Open Container Initiative. The Open Container Initiative allows for either runtime; well, runc, I think, is their official way of running a container. Docker is the one everybody knows, because you start with it; it's something that you can get running on

your system really fast, but Docker itself is not something that a lot of companies really like being tied to. So the OCI was put together, and it has Docker as a member, but it also has Red Hat and CoreOS and Google and all these other places as members, and that's why I say their spec doesn't specify a security model. Now, that's going to be changing really soon, but until then, keep in mind that whichever one you choose may or may not be supported. So what we've done, instead of adopting a security model, is drop capabilities and monitor our containers, monitoring the event stream out of our containers. So I've mentioned

a container orchestration system. That's one of the things that containers have really lent themselves to. How many of you have ever heard of Jevons paradox? Jevons paradox comes from the 1800s, and it was about the use of coal. The paradox was that the more coal was available, the more people used it. It's kind of similar to electricity today: the more there is available, the more uses we find for it. Same thing with containers: the easier it is to use containers, the more you containerize everything. Orchestration systems are what grew up around containers to move containers around, to start them up, to figure out scheduling. So what you have now is the

concept of a meta operating system across multiple computers. The clear winner there right now is Kubernetes, and we're not going to delve too much into Kubernetes, because that's a whole other ball of wax, might be a next talk. But the orchestration systems are very opinionated, and it's worth reading what the opinions are of the people who put these systems together. One of the opinions is software-defined networks, which are really interesting. For example, Google treats their data centers as one black-box compute resource, and inside of that data center, ad hoc networks are created using the container orchestration systems. To back up a little bit more: a container is not just a process, it also has injected in it a

network driver, and so it looks to the developer as if you're getting an entire VM all of your own, and that's really attractive for software engineers. Because you're getting a network driver injected, that network driver could be anything, and that's where the software-defined networks have come in. It allows us to create software-defined data centers, which are kind of scary and also promising at the same time, because what this means is I can shrink my application down to where I have this group of containers that all talk to each other but don't talk to any other containers. Orchestration systems default to "just work," so Kubernetes does not include any opinions on security out

of the box. You have to add that yourself, and there's a lot of places where you can. Going back to the nudge principle: they want it to just work so that people will adopt it, but we haven't started nudging people to use more secure practices. Another example of this is running your own registry: your own Docker registry doesn't include security by default, but there's a lot of options there to allow LDAP access and all that. I neglected to mention earlier that your registry system should be separated: allow developers to push to their own repos, under their own names, but don't allow them to push to the production repo. Gate that by Jenkins or

some other build server. One thing to point out is that because orchestration systems don't have security on by default, any node can talk to any other node in the system by default, and that's something to really pay attention to, especially on all the worker nodes. If you have a rogue container in there and you haven't deliberately segmented access, then one bad container could talk to anything else. I really want to get this term out there as far as container security goes: bulkheading, defense in depth. If we're going to treat our container systems with the same depth of security, then we need to think about how

we can assume that one of our containers is compromised and then how do we keep other containers from being also compromised and that's part of the kernel capabilities dropping that easy or dropping that early and a lot of it's monitoring too so tagging is the way forward rather than so in times past we had one computer one VM and we knew what it did and we used it by name and stuff like that going forward we're gonna have containers and how many of you have heard the term pets versus cattle when it comes to containers well there's a third term which has come out more recently which is insects containers as insects doing one function

only one function and being ephemeral and when you have that you can't really log and monitor things in the way that you used to do the best way to do it and Google does this really well is by tagging so this tag can talk to this other tag but it can't talk to a different tag it requires us to think of components as discrete logical units and it also allows us to think like in terms of like really granular this thing can talk to this thing on this port but it can't reach back out or something like that yeah containers are designed to be in motion so if you have a container environment and you have a container

that's been up for like a hundred days or something like that that's bad you need to have something that goes out there and harvests those containers because a lot of things could change we've run into this in our cloud where environment variables change and they haven't been reapplied to the new containers because the new containers haven't come up so that when somebody does kick a container it gets a bad value and it can't come all the way up continuously refreshing catches that early and real quick right before we end this is another rabbit hole that we could go down for a long time eBPF I would argue is the future of not just so eBPF extended Berkeley

packet filters you've used them if you've used IP filter yeah IP filter then you've used eBPF you don't know it but that's what's going on behind the scenes so extended Berkeley packet filters is an ad hoc measurement and limited control in kernel space this is basically byte code that gets loaded into the kernel and the kernel executes it in kernel mode that then operates on one stream of events either syscalls or network the first target was network so it's effectively dtrace for linux and this has been slowly piecemealed out into the linux kernel for the last year or so and the interesting thing is it hasn't gotten as much notoriety as it should this is useful so I'll skip to

the end there it's useful for evaluating both performance and security and I think this is really fascinating because with containers performance and security are wedded together down at the syscall level down at how you measure perf how you measure like what the container's doing and how it's doing it that means that if you're selling this up the chain you can say paying attention to our containers will make them both faster and more secure like adding all that stuff into the like taking care of linting and all that because one of the questions that you'll get asked is why are we adding so many layers of indirection have you been asked that wreath not directly but

that is like one of the things that I mean containers carry their own complexity and it multiplies as you keep on going so this is one of the promises or one of the things that you can use to show how you get better performance you get better security out of it if you go down that path anyway so summary securing the cloud comes down to two questions to ask ourselves what's running in our containers or even what's loaded in our containers what's available and then how can we be sure our containers are behaving normally and that goes to the monitoring part so I glossed over a whole bunch of tools and a whole bunch of utilities I highly

encourage you to take a look at the awesome list that I put together I try to keep this reasonably up-to-date it contains references to tools like Clair that are able to scan all the linting tools that I was talking about all of that gets put in here how to do a pipeline build with Jenkins and use docker containers as linters and all these other things so with that are there any questions any comments
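
The tagging idea from the talk (this tag can talk to this other tag on this port, but nothing else can) can be sketched as a default-deny allow-list. This is only a minimal illustration in Python: the tag names, the `ALLOWED` table, and the `can_talk` function are hypothetical and are not taken from the talk or from any real orchestrator API.

```python
from typing import Iterable, NamedTuple


class Rule(NamedTuple):
    """One allowed flow: source tag -> destination tag on a single port."""
    src_tag: str
    dst_tag: str
    port: int


# Hypothetical allow-list. Anything not listed here is denied,
# including traffic in the reverse direction.
ALLOWED = {
    Rule("frontend", "api", 8080),
    Rule("api", "database", 5432),
}


def can_talk(src_tags: Iterable[str], dst_tags: Iterable[str], port: int) -> bool:
    """Return True if any source tag may reach any destination tag on this port."""
    dst = list(dst_tags)
    return any(Rule(s, d, port) in ALLOWED for s in src_tags for d in dst)


if __name__ == "__main__":
    print(can_talk({"frontend"}, {"api"}, 8080))       # allowed flow
    print(can_talk({"api"}, {"frontend"}, 8080))       # reverse direction is not listed
    print(can_talk({"frontend"}, {"database"}, 5432))  # no direct rule
```

Because the rules are keyed on tags rather than host names, they keep working when individual containers are replaced, which is the point of treating containers as ephemeral.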

yeah so we use a lot of go and one of our systems that we use so we use gometalinter and hadolint hadolint is what we use it's the dockerfile and inline bash linting solution and that's a stage and then another stage is our gometalinter for our go code base because we use a lot of go are you talking about an example of the actual Jenkinsfile

hmm

so yeah so for that we use see there was the goharbor goharbor is what we use to do analysis of the image itself and what's in the image and then that also scans it rescans it when it goes to promote to another environment so alright anybody else if not thank you guys for coming and hope you have a good rest of the day [Applause]
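
The point made in the talk about containers being designed to be in motion (a container that has been up for a hundred days is a warning sign) can be illustrated with a small age check. This is a rough sketch in Python under stated assumptions: the `stale_containers` function, the container names, and the seven-day threshold are hypothetical, and in practice the start times would come from whatever the orchestrator reports.

```python
from datetime import datetime, timedelta, timezone
from typing import Dict, List, Optional


def stale_containers(
    started_at: Dict[str, datetime],
    max_age: timedelta,
    now: Optional[datetime] = None,
) -> List[str]:
    """Return the names of containers that have been running longer than max_age."""
    current = now if now is not None else datetime.now(timezone.utc)
    return sorted(
        name for name, started in started_at.items() if current - started > max_age
    )


if __name__ == "__main__":
    now = datetime(2024, 1, 31, tzinfo=timezone.utc)
    # Hypothetical inventory of container start times.
    inventory = {
        "ingest-1": now - timedelta(days=100),
        "ingest-2": now - timedelta(days=2),
        "api-1": now - timedelta(days=8),
    }
    for name in stale_containers(inventory, timedelta(days=7), now=now):
        print("refresh candidate:", name)
```

Running a check like this on a schedule and replacing whatever it reports is one way to catch configuration drift early, such as the changed environment variables mentioned in the talk.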