BSidesATL 2020 - Protect: Expose Yourself Without Insecurity: Cloud Breach Patterns

All right, it is one o'clock in Atlanta and we are ready to get going. Real quick, I'd like to thank all of our sponsors, who have been with us through this transition to a virtual conference; they've been super supportive. At Diamond level we have WarnerMedia. At Gold level, Kennesaw State University Coles College and the KSU Department of Information Systems, Bishop Fox, Coalfire, Genuine Parts Company, and NCR. At Crystal level we've got Critical Path Security and Synopsys. Silver level: Errands, Binary Defense, Black Hills, Corelight, and GuidePoint Security. At Bronze level we have NCC Group, and our in-kind sponsors are EC-Council for online training and Secure Code Warrior for the virtual CTF. We'd also like to thank

Crosshair Information Technology, Joe Gray, Offensive Security, and PentesterLab for their contributions to our raffle prizes. Make sure to go to the raffle giveaways channel and get signed up if you haven't already; there are some really great prizes. We'd also like you to drop a pin on our map so we can get an idea of where everybody's coming from; I'll post a link to that in the Track Protect channel in a minute. And without further ado, I will pass it off to Oscar and Brandon. Hey everybody, thanks Patrick for the introduction, and thank you BSides for having us. It's been an interesting transition with everything going virtual lately, so we're glad to be

here. First, let me make sure you can see my screen. There we go. Can everybody see it? Yes? Perfect. All right, welcome to today's talk, Expose Yourself Without Insecurity. During this talk we're trying to answer the question: how do you determine your exposure on cloud infrastructure? In other words, what is the publicly facing external infrastructure for the cloud accounts within your organization? My name is Oscar Salazar, principal researcher at Bishop Fox. This is Brandon Gaudet; he also works on the continuous attack surface testing team at Bishop Fox, and we've been working on techniques, procedures, and tools to help improve that process. Yeah, and so before we go into the cloud and what

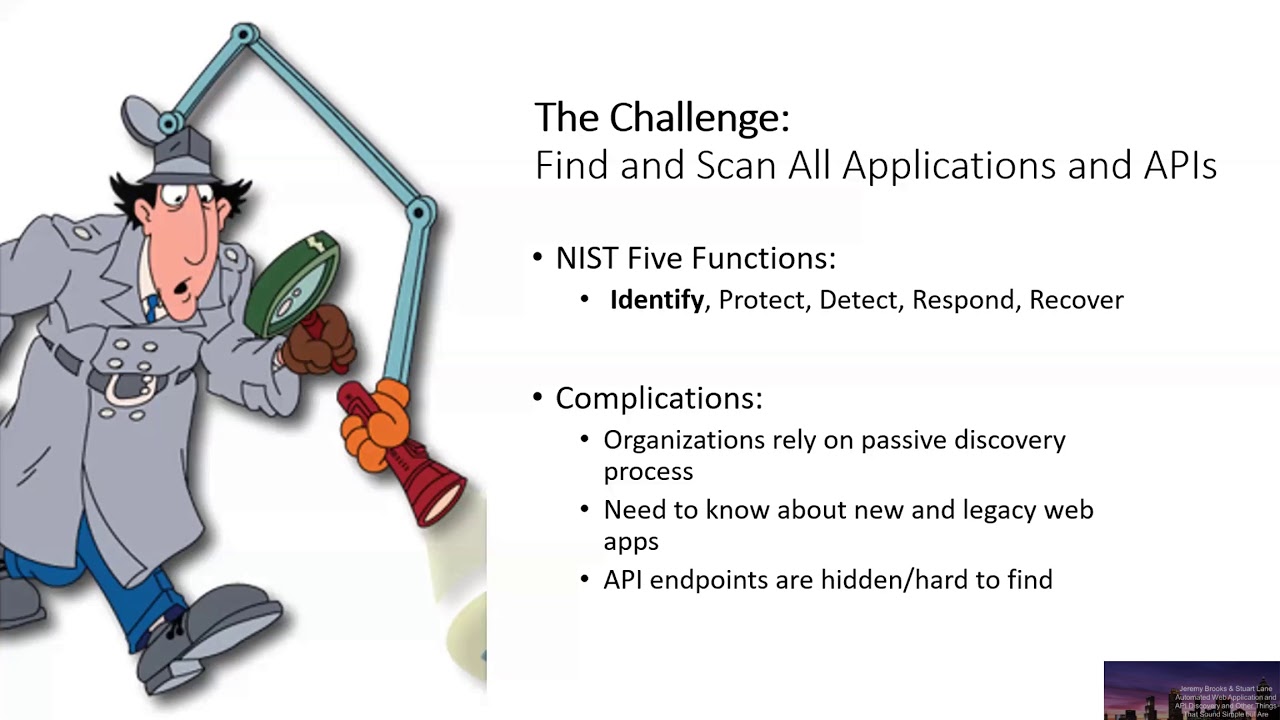

your cloud exposes on the public Internet, the first thing you have to do is define what an asset inventory is, and what the success criteria are for deciding that your asset inventory is what you want it to be and is doing what you need it to do. During our work, we took a step back and looked at this: what do we want out of it, what is it going to do for us, and what does it need to have in order to do that? Those break down into some standard requirements and some new approaches that we're taking. So the

first one is simply that it is complete and accurate. You don't want to be tracking assets that you no longer own, because you could waste your time tracking down vulnerabilities or other problems on them. And an inventory is only as good as it is accurate: you only need to miss one asset at one subsidiary that has a critical vulnerability for your asset inventory to have failed you, because you weren't tracking something that was critical to your company's infrastructure. So once you have this base of accurate inventory, what is the next step? For us, it was to take a different

approach than the old-school ones, where you do a pen test once a year and redefine your assets every six months or so, depending on your company and organization, and instead move to a continuously updated set of assets. At any point in time, if I have a question about my organization's exposure to the Internet, I want to be able to go to this asset inventory and just ask it. That means you have to update that inventory on a shorter cycle. It can't be every year; you should try to get it down to monthly, weekly, or even daily in some cases. So depending on your

organization or your level of security, you want to reduce that interval as much as possible, so that any time you have a question you can go and ask it. The last big thing is that what I want to use my asset inventory for is asking questions, so we need to enrich the data. We don't want just a list of assets, like a list of domains, IPs, and CIDR ranges. When I have a question about my exposure on the Internet or what assets I own, I mostly want to be able to answer a specific question, as in, hey, do I have this type of asset on my

network? Just having a domain or an IP address isn't enough to answer those questions, so we want to turn the data in our asset inventory into something more actionable. Don't just keep domains, IPs, or CIDR ranges; scan them and see what ports are open, what services are running on them, what they resolve to, what name servers they use, and any other data that gives you a better picture of what that inventory actually means, so that you have the data required to act on it. So, breaking those three things down: if you can do all of them and

do them continuously over time, and always be able to ask a specific question, that defines a very successful inventory: if I have a question, I can go and ask it, get an answer, and know that the answer is correct and accurate. From our perspective, we tend to focus on external attack surfaces, which end up being your targets. A target would be a scheme, host, and port combination, or an IP address, plus a way to interact with it; that's really what we're trying to target here. So yeah, the way that we tackle this at Bishop

Fox is that we start by building a baseline, and to build a good baseline we take a couple of different approaches. The first thing we try to do is identify a brand: what is your organization's brand, and what are all of the things associated with your organization? We start moving from there. We want to evaluate what your brand includes. What's the org structure within this company? What business units do they have? Do they have specific products that they service or sell? Are they large enough to have mergers and acquisitions be part of that infrastructure? Within those business units and different portions of

the organization, we start tracking different types of assets: their primary domains, any subdomains associated with those domains, your IP address space such as CIDR ranges, active services that they may host as part of their infrastructure, and any ASNs associated with them. In addition, we make sure we're continuously updating that data with new DNS information and new records as they come up, that the domains we're tracking stay up to date as organizations purchase new ones or relinquish old ones they're no longer using, and that we have an accurate set of CIDR

ranges, so that when we're discussing what your attack surface is, we know the correct set of IPs, since ASNs and their ranges change over time. So yeah, as Oscar stated, at Bishop Fox we currently do this from a completely external perspective. But there's a second way of doing this, and that's where this presentation is trying to push: from a defensive standpoint, you have access to more data than you do from an external standpoint. You have access to master records for DNS, and you have direct access to your cloud accounts, which we'll get into in a lot more detail later. So you have

a different view of your network from the inside than from the outside, which gives you access to more data, a different way to find these domains, and a different understanding of how the organization works. Listed on the slide are a couple of examples, some already named: DNS records, domain registrars, and scanning. Even just what your internal IT team knows about the organization that might not be public, like proprietary information or common knowledge within the organization, is very different from what you know as an outsider. All of this can be used to find domains that are different from the ones you would find

on an external attack surface, so you should be using both; that's the second approach. So, to start talking about the methodology we think makes the most sense: when you're trying to create a complete inventory of your externally accessible targets, you have to look at it from both sides, from the inside looking out and from the outside looking in. For the inside looking out, like we just discussed, you have access to master records; you have access to basically the source of truth for the information. If I'm trying to identify all the subdomains of a domain that belongs to your organization

from an external perspective, I have a limited set of resources, and I essentially have to guess what the subdomains might be and try to find records. From an internal perspective, you have access to your DNS zones and to all the subdomains that are in fact there, so you have a more complete view than from the outside. On the flip side, though, someone looking from the inside only knows what they know. You can access the records and query the systems that you know about, but shadow IT exists in many organizations. We've seen customers tell us, well, we have no AWS infrastructure,

for example, and then during the assessment we break into their AWS infrastructure. It turns out that somebody at the organization, a higher-up, had created an account, and we were able to correlate that data and find their shadow IT. You can only protect what you know about, and that's why we also want to look at it from the outside in: red teamers and OSINT techniques can help identify assets that you own but are unaware of. So this is an example of what we did with one of our customers. We took the outside-in approach while asking our customer to hand us all of

the results from their inside-out approach. In this case they handed us 4,381 domains that they knew about from an inside perspective. Then we took the outside-in perspective, doing a full deep dive into the organization, its acquisitions, and its subsidiaries with our own identification, and we ended up with 11,191 domains. Not all of those may actually belong to them: roughly eight thousand were high confidence. What we mean by confidence here is that those domains are correlated with multiple data points that tie them to the company; for example, they use the company's name servers and a screenshot of the site looks like the company's.
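The merge-and-score step behind these numbers can be sketched in a few lines of Python. This is a hypothetical illustration, not Bishop Fox's tooling: the function names, the evidence labels (`nameserver`, `screenshot`, `whois`), and the rule that two or more independent data points mean high confidence while a single one means medium are assumptions chosen to mirror the description above. It also reports which domains only one side found, which is the comparison drawn from the two lists.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Set, Tuple

# Assumption: "multiple data points" is read as two or more independent signals.
HIGH_CONFIDENCE_MIN_SIGNALS = 2


def normalize(domain: str) -> str:
    """Lowercase and drop a trailing dot so both lists use the same spelling."""
    return domain.strip().lower().rstrip(".")


def confidence(signals: Set[str]) -> str:
    """Map the number of correlating data points to a confidence label."""
    if len(signals) >= HIGH_CONFIDENCE_MIN_SIGNALS:
        return "high"
    if signals:
        return "medium"
    return "none"  # nothing external ties this domain to the company


def merge_inventories(inside_out: Iterable[str],
                      outside_in: Dict[str, Set[str]]) -> Dict[str, dict]:
    """Combine the internal domain list with externally discovered domains.

    inside_out: domains the organization already knows it owns.
    outside_in: externally discovered domain -> set of evidence labels
                (hypothetical labels such as "nameserver" or "whois").
    """
    merged = defaultdict(lambda: {"sources": set(), "signals": set()})
    for domain in inside_out:
        merged[normalize(domain)]["sources"].add("inside-out")
    for domain, signals in outside_in.items():
        entry = merged[normalize(domain)]
        entry["sources"].add("outside-in")
        entry["signals"].update(signals)
    for entry in merged.values():
        entry["confidence"] = confidence(entry["signals"])
    return dict(merged)


def coverage_gaps(merged: Dict[str, dict]) -> Tuple[List[str], List[str]]:
    """Return (domains missed from the outside, domains missed from the inside)."""
    only_inside = sorted(d for d, e in merged.items() if e["sources"] == {"inside-out"})
    only_outside = sorted(d for d, e in merged.items() if e["sources"] == {"outside-in"})
    return only_inside, only_outside
```

A privacy-protected domain that only appears in the internal list lands in the first list returned by `coverage_gaps` with confidence `none`, which is the kind of domain an outside-in pass cannot see.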

They essentially have more than one data point that makes us believe the domain belongs to the company. Then there are the medium-confidence domains, just over three thousand, which have only one data point. That might be Whois data or something else, and it could be somebody else just trying to look like them, or a domain that is no longer theirs but still looks like theirs. When we combined this data, we found that from an external perspective we missed only 24 domains, while from an internal perspective they missed 11,000 domains. But the number of domains here isn't necessarily the important thing to

pull out of this slide. What matters is that neither approach was good enough to find all of the domains. We ended up missing 24 domains, and while digging into them we realized they had things like privacy-protected Whois data and didn't have any data directly correlating them to the company or any of its acquisitions. Those types of domains are almost impossible to find from an external perspective, because nothing ties them to the company. From an internal perspective, though, they had records showing that they owned those domains, hosted them, or controlled what they were doing, which is not something you'll ever find from the outside. On the other side, this organization had a

lot of shadow IT, in the form of acquisitions that the security team didn't know about. When we looked at it from an external perspective, the security team, and essentially the org itself, was behind, because this company acquires a lot. We found domains associated with acquisitions whose infrastructure hadn't been pulled into the security team's view yet, so they just weren't tracking it. The takeaway is that neither approach is good enough to find everything, but if we take both approaches at the same time, we'll have a better understanding of the total external attack surface and asset management of an organization. So let's say that now

you've gone through the process and you're convinced that you want to use the inside-out approach. How do you find all of the AWS accounts that you own? We have some customers that have hundreds of AWS accounts, and they need to track all of them. How do you find shadow IT within your organization? One technique is to follow the money: talk to your finance group, see if anybody has put AWS charges on their credit cards, and track those people down to get access to that data or visibility into their accounts. One thing to know is that even if you're looking for AWS,

Azure, or Google Cloud charges, those aren't necessarily the only ways shadow IT can show up. There are additional SaaS services that can also create AWS or other cloud accounts on your behalf, so just be aware of that. Once you've collected information about all the accounts that you want to have access to, how do you manage them? I think the answer is that you create one more account, one that has access through something like a read-only auditor role, to get insight into all the accounts that you have identified.
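One way to wire up that central auditor account, assuming the accounts you found are members of a single AWS Organization, is to enumerate the active member accounts and assume a read-only role in each. This is a hedged sketch rather than a prescribed setup: the role name `ExposureAuditor` is hypothetical, each member account would need that role with a trust policy for the auditor account and a read-only policy (for example AWS's managed `SecurityAudit` policy) attached, and `list_accounts` has to be called from the management account or a delegated administrator. Shadow IT accounts that live outside the organization won't appear in this listing until they are brought in.

```python
AUDIT_ROLE_NAME = "ExposureAuditor"  # hypothetical role name deployed in every member account


def audit_role_arn(account_id: str, role_name: str = AUDIT_ROLE_NAME) -> str:
    """Build the ARN of the auditor role in a member account."""
    if not (len(account_id) == 12 and account_id.isdigit()):
        raise ValueError(f"not a 12-digit AWS account ID: {account_id!r}")
    return f"arn:aws:iam::{account_id}:role/{role_name}"


def active_account_ids(pages):
    """Yield IDs of ACTIVE accounts from Organizations ListAccounts response pages."""
    for page in pages:
        for account in page.get("Accounts", []):
            if account.get("Status") == "ACTIVE":
                yield account["Id"]


def auditor_sessions(role_name: str = AUDIT_ROLE_NAME, session_name: str = "exposure-audit"):
    """Yield (account_id, boto3.Session) pairs backed by temporary read-only credentials."""
    import boto3  # imported lazily so the pure helpers above work without boto3 installed

    org = boto3.client("organizations")
    sts = boto3.client("sts")
    pages = org.get_paginator("list_accounts").paginate()
    for account_id in active_account_ids(pages):
        creds = sts.assume_role(
            RoleArn=audit_role_arn(account_id, role_name),
            RoleSessionName=session_name,
        )["Credentials"]
        yield account_id, boto3.Session(
            aws_access_key_id=creds["AccessKeyId"],
            aws_secret_access_key=creds["SecretAccessKey"],
            aws_session_token=creds["SessionToken"],
        )
```

Each yielded session can then be handed to per-service collection code, for example `session.client("cloudfront")`, so that one set of read-only credentials per account covers the whole inventory pass.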

Then the next questions are: what do you do with those accounts, and what do you care about? You can boil it down to a few pieces of information. Once you have access to an account, there's different information you can pull out of these cloud providers, and it changes depending on your access level and which services you're using, but it boils down to a couple of different things. There's your internally facing stuff, which should only be accessed internally, but misconfigured VPCs and other things can mean that's not necessarily the case. So there's the internal side, and there's also the Internet side: some services,

like S3, essentially create a link to the outside Internet, where people can access them externally. And then there's the in-between: different services you can set up that link the internal to the external. Something we did look into is AWS resource relationships. There's something now called IAM Access Analyzer that looks at your infrastructure and determines those relationships, so it can help you determine whether those internal things are publicly facing or whether any of those misconfigurations are happening on the internal systems. Yeah, so in some cases you can configure AWS, for example, to give any other account within

AWS at all, not just within your organization, access to your data. That's not really what we're focusing on in this talk; we're focusing on Internet-facing systems from the external perspective. When we're looking at this, the types of data we're trying to collect are host names, whether that's a DNS record or an IP address; what ports are exposed on that infrastructure; what protocol is used to interact with it; and whether there are any relative paths that matter when you're accessing it. In some cases, like if you're running a Lambda function behind an API Gateway, the target is a host name plus a

path that you need in order to access that content, so you need the relevant contextual knowledge of how to interact with that system. One of the interesting things to note for cloud services in particular is that there's a lot of virtual hosting of these systems, with many host names sharing the same IP addresses. If you just scan the Internet on an IP basis, in a lot of cases you will not reach the correct back-end application that's serving your data. An EC2 box has an IP address assigned to you, but if you are using S3, for example, you won't really access it by IP address; you won't get to the

system that you're trying to target. When we started this, we were trying to answer that question: what systems are exposed to the Internet? In 2011, the number of services that AWS had was a handful; you could count them very easily. By 2017 that number had exploded, and it continues to go up every year as they release several new services, so it becomes harder and harder to keep track of which of those services have publicly facing data and which of them expose you on

the Internet. Some of the previous research we looked into included CloudMapper; AWS Public IPs, which was linked from CloudMapper; Access Analyzer, which we discussed a little earlier; and Cartography, a tool by Lyft that did a little bit of everything. The problems we had with those were that they weren't comprehensive: they covered only a select few endpoints, so not all services were covered. Some of them had port information and some didn't; some had protocol information and some didn't; some focused on access control lists; and none of them

did exactly what we needed. So our first pass at this was looking on the Internet for the URL structures, or access patterns, that you can use to get access to this data. First we went to APIs.guru and found information, which seems to be updated fairly often, about which AWS services were available and what their URLs were, and we used that to perform some analysis with passive DNS data to see what kind of data you could get access to. We put together some examples of how the domains are structured; I'll go through this part quickly because of time. So here are some

examples of exposed Elasticsearch indexes that contain sensitive data, which you can access only by hitting the correct host name. If you access these by IP address, it doesn't work; you can't scan for these with the old method of scanning IP ranges. You need host names, so making sure that you can find these in your environment helps you protect yourself. Another example was MediaStore, which lets you post and watch videos. We found patterns that allowed us to access channels that were supposed to be paid channels, or part of Samsung's library of TV channels, without owning a Samsung TV.
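Because these resources are addressed by host name rather than IP, a practical first step is to recognize AWS service host names in DNS data you already have, such as zone exports or passive DNS. The exact patterns from the slide did not survive in the transcript, so the regular expressions below are assumptions based on AWS's documented default endpoint formats; they cover only a few services and skip variants such as dual-stack and FIPS endpoints.

```python
import re
from typing import Optional, Tuple

# Assumed default endpoint formats (non-exhaustive). Order matters: the S3
# website pattern must be tried before the generic S3 pattern.
AWS_HOST_PATTERNS = [
    ("s3-website", r"^(?P<name>[a-z0-9.-]+)\.s3-website[.-][a-z0-9-]+\.amazonaws\.com$"),
    ("s3", r"^(?P<name>[a-z0-9.-]+)\.s3(?:[.-][a-z0-9-]+)?\.amazonaws\.com$"),
    ("elasticsearch", r"^(?P<name>(?:search|vpc)-[a-z0-9-]+)\.[a-z0-9-]+\.es\.amazonaws\.com$"),
    ("api-gateway", r"^(?P<name>[a-z0-9]+)\.execute-api\.[a-z0-9-]+\.amazonaws\.com$"),
    ("mediastore", r"^(?P<name>[a-z0-9]+)\.data\.mediastore\.[a-z0-9-]+\.amazonaws\.com$"),
    ("cloudfront", r"^(?P<name>[a-z0-9]+)\.cloudfront\.net$"),
]
COMPILED = [(service, re.compile(pattern)) for service, pattern in AWS_HOST_PATTERNS]


def classify_aws_host(hostname: str) -> Optional[Tuple[str, str]]:
    """Return (service, resource name) for a recognized AWS host name, else None."""
    host = hostname.strip().lower().rstrip(".")  # DNS names are case-insensitive
    for service, regex in COMPILED:
        match = regex.match(host)
        if match:
            return service, match.group("name")
    return None
```

Feeding every name from a zone export through `classify_aws_host` and keeping the non-`None` results gives a list of AWS-hosted names to probe by host name instead of by IP address.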

Our current solution is another tool we're introducing, smogcloud, which is essentially a tool to help you identify and pull out all the host names, IP addresses, and URIs that are publicly exposed on your AWS infrastructure. Yeah, so the big thing here is that we ran into the same problem: which services do we actually want to look at? We looked at the example documentation for the AWS CLI, ran some grep commands, and tried to understand which ones were returning the data we wanted, either domains or IPs. After enumerating again, you end up with a much smaller list than the hundreds of different services that are available in AWS. So at

that point you have to take a deeper look. We essentially went through those, and I think the next slide shows that we ended up with 288 endpoints across 62 services that returned either host names, URIs, or IPs. From that we could see which services were involved, so we started manually going through each service and looking at these endpoints to identify which ones would disclose that data and how. So far we've gone through 14 services, including API Gateway, CloudFront, and S3, and wrote tooling that can be easily extended to pull the correct information out of those services that would be publicly facing and interesting to look at from an external perspective.
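The "easily extendable" shape of that tooling can be illustrated with a registry of per-service extractor functions that turn raw API responses into targets, where a target is the scheme, host, port, and path combination described earlier. This is a minimal Python sketch, not smogcloud's actual code: the response shapes follow boto3's `cloudfront.list_distributions()`, `apigateway.get_rest_apis()`, and `s3.list_buckets()`, while the service keys, the `Target` class, and building S3 and API Gateway host names from one caller-supplied region are assumptions for illustration. API Gateway stage paths would need a separate `get_stages` call and are omitted.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Iterable, List


@dataclass(frozen=True)
class Target:
    """An externally reachable target: scheme + host + port, plus a path."""
    scheme: str
    host: str
    port: int
    path: str = "/"

    def url(self) -> str:
        default_port = {"http": 80, "https": 443}.get(self.scheme)
        netloc = self.host if self.port == default_port else f"{self.host}:{self.port}"
        return f"{self.scheme}://{netloc}{self.path}"


Extractor = Callable[[dict, str], Iterable[Target]]
EXTRACTORS: Dict[str, Extractor] = {}


def extractor(service: str):
    """Decorator: register a function that turns one API response into Targets."""
    def register(func: Extractor) -> Extractor:
        EXTRACTORS[service] = func
        return func
    return register


@extractor("cloudfront")
def cloudfront_targets(response: dict, region: str) -> Iterable[Target]:
    # list_distributions() nests distributions under DistributionList.Items
    for dist in response.get("DistributionList", {}).get("Items", []):
        for host in [dist["DomainName"]] + dist.get("Aliases", {}).get("Items", []):
            yield Target("https", host, 443)


@extractor("apigateway")
def apigateway_targets(response: dict, region: str) -> Iterable[Target]:
    # get_rest_apis() returns API IDs only, so the invoke host name is built here
    for api in response.get("items", []):
        yield Target("https", f"{api['id']}.execute-api.{region}.amazonaws.com", 443)


@extractor("s3")
def s3_targets(response: dict, region: str) -> Iterable[Target]:
    # list_buckets() returns bucket names, not URLs (assumes all buckets are in `region`)
    for bucket in response.get("Buckets", []):
        yield Target("https", f"{bucket['Name']}.s3.{region}.amazonaws.com", 443)


def collect(responses: Dict[str, dict], region: str) -> List[Target]:
    """Run every registered extractor over its service's response; skip unknown services."""
    targets = set()
    for service, response in responses.items():
        if service in EXTRACTORS:
            targets.update(EXTRACTORS[service](response, region))
    return sorted(targets, key=lambda t: t.url())
```

Adding coverage for another service then means writing one more decorated function, without touching `collect`.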

So there are a couple of tricky things. The 680 figure only covers the ones that returned fully formed host names, URIs, or IPs, but there are also services that don't return that information directly. For example, S3 will give you your bucket name but not the full URL. So there's still additional combing that needs to be performed through the 194 services that are available, but I think this is a good start. We've pushed the code to the repo and put some documentation there; you can run it and see the URIs, IPs, and host names in your externally facing cloud infrastructure. Past that, we're working towards

getting a more and more comprehensive list to round out our view of what your external attack surface is when looking at an AWS account. Thank you for listening. I know we had to go through the end faster because of time. If anyone has any questions, we'll be around to answer them via the Slack channel or any other Q&A system.