BSidesATL 2020 - Detect: Automated Web Application & API Discovery & Other Things That Sound Simple


It is my time to once again recognize our sponsors, and we put up our slide deck here of our sponsors because, as I've said multiple times throughout the day, without our sponsors on board none of this would have been possible today. So I want to take a few minutes to acknowledge our sponsors at their various levels. At the Diamond level, WarnerMedia. At the Gold level, my home college, the Kennesaw State University Michael J. Coles College of Business, along with my home department, the Coles College Department of Information Systems. In addition to them, we have Bishop Fox, Coalfire, Genuine Parts Company, and NCR, and we are very thankful for all of their support at the Gold level. At the Crystal level we have Critical Path Security and Synopsys, and we are very grateful for their sponsorship at the Crystal level. At the Silver level we have a few sponsors (I've got to read off the list, I'm getting tired, sorry): Aaron's, Binary Defense, Black Hills Information Security, Corelight, and GuidePoint Security, and we are very thankful for their sponsorship at the Silver level. At the Bronze level, NCC Group: thank you very much for your sponsorship at that level.

I also want to acknowledge a couple of in-kind sponsors. EC-Council provided a great training opportunity yesterday that some of you took advantage of. We also want to thank and acknowledge Secure Code Warrior. They have been running a coding CTF all day long in a separate track, and I've been keeping an eye on that a little bit. People have been banging away at it all day, and it looks like it's nip and tuck as to who's going to end up taking the top three places. They've got some type of prizes over there; I'm not sure exactly what they are, but they're giving some prizes away.

Next, I want to thank some individuals and organizations for contributing to the giveaway process we've been running most of today, which Joette has been doing a wonderful job with over in the giveaway channel. I want to acknowledge Mike Costa and Crosshair Information Technology, I want to acknowledge Jo Gray, and we also want to acknowledge Offensive Security as well as PentesterLab for everything they've contributed for us to give away to you at various points throughout the day.

This is a virtual global conference for the first time ever, and as a result we are curious about where you are. So I'm going to stop sharing for a minute. If you haven't done so already, please go drop a pin in the map at the URL I'm about to place here in the channel, because we want to know where you are: where you're coming from and what part of the world you're hanging out in lately. So if you wouldn't mind, drop a pin there and let us know where you are.

Also, as I mentioned previously, we've been giving away things all afternoon. Joette has been handling that over in this channel, and I'm going to put the link in here just to remind you to keep an eye on it. There is a sign-up form you need to fill in to be eligible to win. For those of you who are privacy-concerned, I get it, but if you want to get stuff sent to you, we need to know who you are and how to get ahold of you. So we need you to punch in real information, a real mailing address, a real email address, and a real cell phone number, for any of that to happen.

We've also had people asking us all day long, "Hey, I missed a talk, where can I find it?" Well, we're recording all of them, and we have a professional post-production process in place. Once those talks have been run through post-production, we're going to make them available on our YouTube channel. People have been asking where the YouTube channel is; well, it's right here for you, I'm posting it in the channel. We're asking that everybody go

out and subscribe to that because I found out a few minutes ago that once we hit 100 subscribers we get to have a fancy vanity URL so the closer you can help us get to 100 the better off we are and we appreciate that um let's see here I think that that's about all I've got so I'm gonna stop yapping grab my piece of paper and introduce our next two speakers we have with us Jeremy Brooks and Stuart Lane and they will be talking with us about automated web application and API discovery and other things that sounds simple but are actually difficult and with that I will turn it over to those two nice to them and I will stop

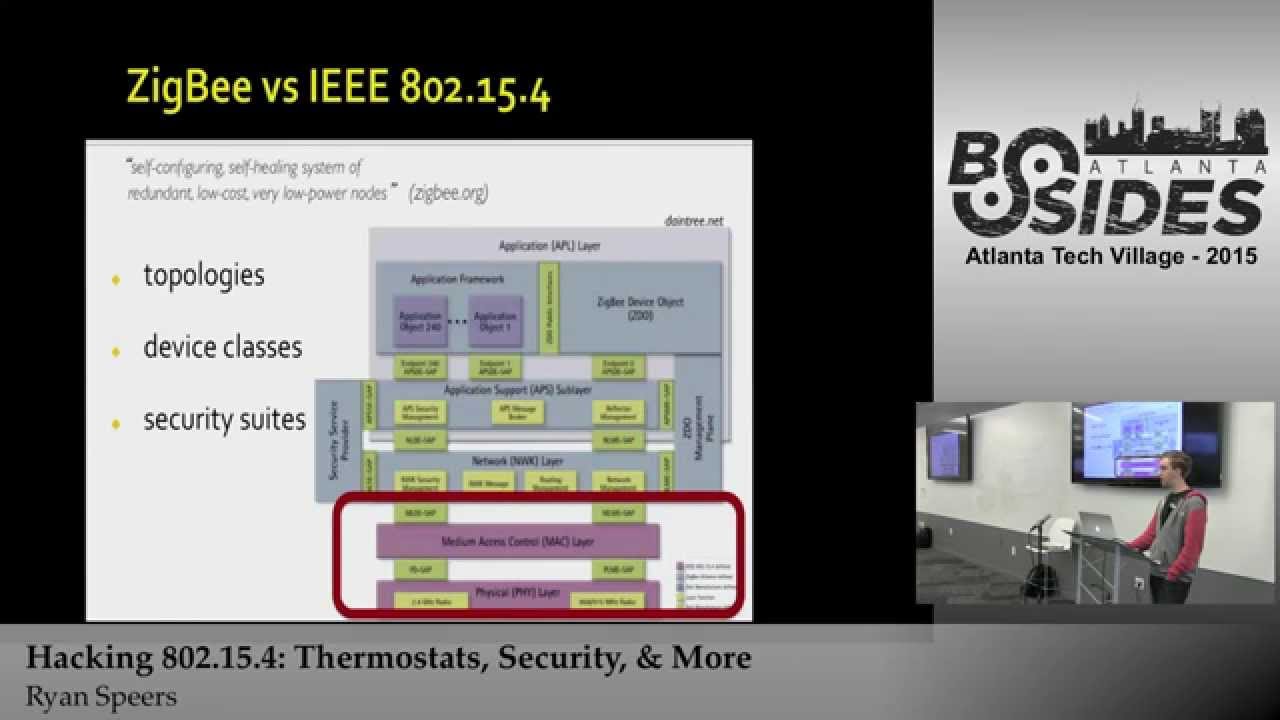

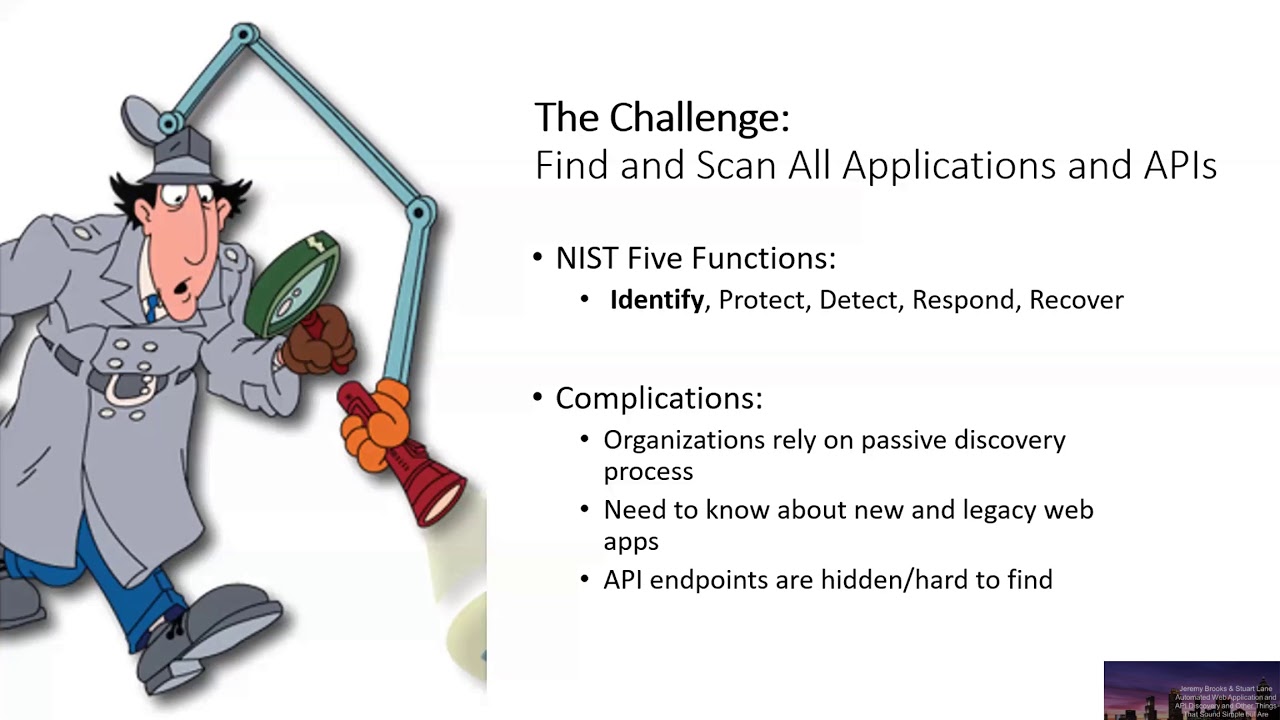

talking good afternoon everyone thanks for spending some time hanging out with us my name is Jeremy Brooks I'm a developer for the last 20 years that sort of switched over net to app sec and focused on app sec for probably the last five plus years working on different things now I'm running a application security program at a Fortune 1000 retailer here today with co-worker mine Stuart let him do is everyone yep I'm Stuart Lane I'm an application security engineer I work with Jeremy I'm also a student at Kennesaw State University majoring in information security insurance and graduating this may so let's do well yeah go else so we get right into it so the challenge that we're presenting is

to find and scan every application and API in your organization. NIST's Cybersecurity Framework defines five functions that together make up a strong cybersecurity program. A lot of people focus on the Protect part, but you can only protect your applications if you know where they are. So we focused our efforts on Identify, which enables us to understand what type of risk is possible within specific environments in our organization. Basically, if you don't know about it, you can't assign a risk to it. We're not going to talk about how to set up DAST and SAST; that's for another talk. We're specifically talking about what comes before that: how do you find those applications? And the challenge with this is that AppSec

teams don't have a great discovery process, or it's a passive process. There are new apps and legacy web apps that maybe no one knows about, and API endpoints pose a completely different challenge because they're difficult to find. It's really like, "Go find all your web apps." Well, okay, but how are we going to do that? That's where teams usually fall back into an old-school onboarding model. So here's what a typical old onboarding process that an AppSec team may have set up looks like. This is a prime example of a waterfall method, and dev teams just don't do waterfall anymore. If you try to implement this in

an agile development model, it will be perceived as a gate. Developers won't follow it; they'll figure out a way to bypass the process, leaving you wondering why no one is filling out the form you created. And even if you can get a dev team to do this, the data they give you really only works for new development, and it becomes out of date as soon as it's handed off to you. So, our approach: you still want to be involved with the dev teams, you want to know what they're working on, and security is still important even in an agile development process. This requires a mind shift and an

understanding of how new software flows through the development teams, and you have to have some idea of how to hook into the different points in the pipeline. So we're going from a passive to an active search for applications. Then, based on the inventory that's built, we identify risk factors, which are specific to our organization and which we'll talk about later on. Based on the risk factors and the inventory, we track changes and create alerts on those changes.

Yep, that's great. So before we dive into the methodology, we'll give you our thought process as we were building this process out and how we sort of

thought about how we were going to tackle this problem. Basically, we went back to the SDLC and thought about what the environment looks like when software is being built, tested, and deployed. What's going on? What kind of activity is there? Repositories are being created, and commits flow into those repositories. If teams are actually doing agile or lean, there should be a CI/CD pipeline, so there should be projects being created. There should be DNS entries for these web apps, right? They're going to have names and URLs that people can visit, so there should be DNS records.

There should be infrastructure being set up, and if they have a proper pipeline, they're probably going to have a dev environment, a QA testing environment, and then a production environment. So our thought process was that we should go look for signs of life: look at sources of truth to figure out where software is. That's the foundational thought behind our pipeline. This is what our pipeline actually looks like at a very high level. We're doing DNS enumeration, so we're collecting lots of hostnames that may or may not be running web applications. We're doing web host discovery on those. We're doing API discovery, which is basically going a

little bit further: web host discovery is just checking whether there's something listening on common web ports and whether it looks "webby," right? Does it speak HTTP? API discovery is looking for REST APIs. Then we're doing risk factor identification, and what we're trying to determine there is: do we really care about this application beyond just knowing that it exists? We're not necessarily going to scan everything or do a full penetration test; we need to look and decide how deep we need to go. And then we're generating alerts on all of this, so we're not having to manually and constantly go look for these things; we're getting feedback from the system. So we'll look at each piece

of this. The DNS enumeration is pretty basic, but we're looking at sources of truth, right? So the first place to look is your DNS servers and their zones. We have a system that is trusted by the master DNS server and can do zone transfers, and that gets us a lot of records back. We're specifically looking at A records, AAAA records, and CNAMEs, and using those to build fully qualified domain names. If you're using Azure DNS or AWS Route 53, you can enumerate DNS through their REST APIs. We're actually using Azure DNS, so we just go through all the zones using the Azure DNS zone API, and then we pull all the records and

we look for those A records and CNAME records. That gets us the big bulk of the hostnames. In addition, we run some tools that look at external data sources: Amass and Findomain are the two specific tools we use (all the tools I'm going to talk about, by the way, are open source). These tools give us a view of shadow IT. I don't know if you saw the Bishop Fox presentation; they touched on this, and we're doing something similar, because you might have found all your DNS records, but there could be some app out there that somebody stood up that's still linked to your domain.
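A minimal sketch of how the two DNS sources might be merged into one hostname inventory. It assumes the zone records have already been pulled (via a zone transfer or a cloud DNS API) as `(zone, name, type)` tuples, and that the external tools' output is plain text with one hostname per line. The function names, the source labels `internal-dns` and `external-osint`, and the in-scope domain filter are illustrative choices, not the speakers' code.

```python
from typing import Dict, Iterable, Set, Tuple

# Record types used to build FQDNs, per the talk.
HOST_RECORD_TYPES = {"A", "AAAA", "CNAME"}


def to_fqdn(name: str, zone: str) -> str:
    """Turn a zone-relative record name ('@', 'www', or absolute 'host.other.org.') into a lowercase FQDN."""
    zone = zone.strip().rstrip(".").lower()
    name = name.strip().lower()
    if name in ("", "@"):
        return zone
    if name.endswith("."):  # already absolute
        return name.rstrip(".")
    return f"{name}.{zone}"


def build_inventory(zone_records: Iterable[Tuple[str, str, str]],
                    external_hosts: Iterable[str],
                    domains: Iterable[str]) -> Dict[str, Set[str]]:
    """Map each hostname to the set of (hypothetical) source labels it was seen in."""
    in_scope = [d.strip().rstrip(".").lower() for d in domains]
    inventory: Dict[str, Set[str]] = {}
    # 1. Internal sources of truth: zone transfers or a cloud DNS API.
    for zone, name, rtype in zone_records:
        if rtype.upper() in HOST_RECORD_TYPES and "*" not in name:  # skip wildcards
            inventory.setdefault(to_fqdn(name, zone), set()).add("internal-dns")
    # 2. External data sources, kept only if they fall under one of our domains.
    for line in external_hosts:
        host = line.strip().rstrip(".").lower()
        if host and any(host == d or host.endswith("." + d) for d in in_scope):
            inventory.setdefault(host, set()).add("external-osint")
    return inventory
```

Keeping the set of sources per host, instead of a flat list, makes it cheap to tell later whether a name was seen only internally, only externally, or both.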

Those two tools are a great way to find out where those apps are and add them to your inventory. So once we've built that list, all we have at this point are hostnames; we don't know what's running on them. We're really interested in web applications, so we need to go look for the ones that look webby. You could use nmap or curl or roll your own thing that goes out and hits various ports, but I came across a tool called httprobe, which is a little Go program. It's pretty fast and really gets the job done: you just feed it a list of hostnames and it spits out

a list of web hosts with the port and scheme attached. Perfect, that's exactly what we need. If you're not having good luck convincing your sysadmins to allow your server to do zone transfers, or you're not getting access to your cloud-based DNS system, the other thing you can do is look at your continuous deployment tools. Those have to push the application out somewhere, so the configuration will contain the hostname where that application lives. That could be a good place to look. We're not at the point where we feel we need to do that data mining.
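httprobe itself is a Go tool; the following is only a Python sketch of the same idea, assuming that "looks webby" means "answers `GET /` with any parseable HTTP response" on a default set of (scheme, port) pairs. TLS verification is deliberately switched off because the goal is discovery, and internal hosts often use self-signed certificates. `speaks_http`, `probe_hosts`, and `DEFAULT_PROBES` are hypothetical names.

```python
import http.client
import ssl
from concurrent.futures import ThreadPoolExecutor
from typing import Iterable, List, Sequence, Tuple

# Assumed default (scheme, port) pairs to try on every hostname.
DEFAULT_PROBES: Sequence[Tuple[str, int]] = (("https", 443), ("http", 80))


def speaks_http(scheme: str, host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if host:port answers GET / with any parseable HTTP response."""
    conn = None
    try:
        if scheme == "https":
            # Discovery only: accept self-signed and internal-CA certificates.
            ctx = ssl.create_default_context()
            ctx.check_hostname = False
            ctx.verify_mode = ssl.CERT_NONE
            conn = http.client.HTTPSConnection(host, port, timeout=timeout, context=ctx)
        else:
            conn = http.client.HTTPConnection(host, port, timeout=timeout)
        conn.request("GET", "/")
        conn.getresponse()  # even a 404 or 500 proves the port speaks HTTP
        return True
    except (OSError, ValueError, http.client.HTTPException):
        # Refused, timed out, TLS failure, invalid host, or not HTTP at all.
        return False
    finally:
        if conn is not None:
            conn.close()


def probe_hosts(hosts: Iterable[str],
                probes: Sequence[Tuple[str, int]] = DEFAULT_PROBES,
                workers: int = 20, timeout: float = 3.0) -> List[str]:
    """Return 'scheme://host:port' for every host/probe pair that speaks HTTP."""
    targets = [(scheme, host, port) for host in hosts for scheme, port in probes]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        results = list(pool.map(lambda t: speaks_http(*t, timeout=timeout), targets))
    return [f"{s}://{h}:{p}" for (s, h, p), ok in zip(targets, results) if ok]
```

For example, `probe_hosts(["www.example.com"], probes=[("https", 443), ("https", 8443)])` checks one extra port; the thread pool matters because most probes against a large hostname list simply wait for a timeout.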

Still, it's another place to look. So at this point we've whittled things down to a big list of web hosts where we suspect web applications are running. We would also like to know where, within those applications, our APIs are. Most developers building new APIs are probably not using SOAP. SOAP was kind of cool back in the day because you could trust that there would be a WSDL endpoint that described pretty much every endpoint of the API, so once you found the WSDL, you were done. REST is a little more complicated because it's kind of the wild, wild west. There is a relatively new standard; it's been

out for a few years now and is fairly widely adopted, so let's just call it the de facto standard for REST APIs. It started life as Swagger, and some people still call it Swagger; it was rebranded a couple of years ago as OpenAPI, but you'll still see those terms used interchangeably. If you're not familiar with it, you can check out the swagger.io site, but it's basically a way to describe your REST API in a machine-readable way, as either a JSON or a YAML document, and that's what we're looking for. So our strategy is to take the list of web hosts that we found and visit some of the common endpoints where we

know our developers publish APIs, and we look for a JSON or YAML document on the other end. That's a pretty good way to do it. It's not bulletproof, and if somebody has a better way I'd like to hear it, but it works well in our environment because we know how our developers build their APIs. You have to know a little bit about that; you might have a different list of common locations. And one point I don't think we made clear: none of this changes your relationship with the developers. You still need good communication, but this lets you go out and fish instead of

sitting around waiting for developers to come to you. Another good place to look, if you use Azure API Management or AWS API Gateway: you can just data-mine that and enumerate all of the APIs. When developers configure their APIs through these Azure or AWS tools, you basically get an OpenAPI doc for free; you just need to find all the resources and visit the endpoint that gives you the OpenAPI doc. The other cool thing about Swagger or OpenAPI is that a lot of tools support it. In Postman, for example, you can just drop an OpenAPI doc in

and it generates a test suite for you. There's also a plugin for Burp and a plugin for ZAP, and a lot of commercial scanners have built-in support, so you can just feed them the document. All right, and then finally, risk factor identification. These are the high-level risk factors we look for; they give us a quick view of how deep we want to go. Again, you might have different risk factors, but these are the ones we agreed on internally as signals that we need to go deeper. Is there a login page? Is the server configured to use weak TLS? I understand that's not an application issue, but we found that applications

that are running on a server configured with weak TLS tend to have other problems, so it's a signal for us. Does it support HTTP without redirecting to HTTPS? In other words, if I can visit the HTTP site and the HTTPS site and basically get the same content, that's a sign of some risk. Are these outdated or old sites? With this enumeration we're probably going to find some legacy stuff that's not part of the pipeline and that nobody's looking at, so we want to be able to identify those older sites. And then risky keywords: that's about looking at the response, or looking

at your Git repositories, for words that might be specific to your environment. In our environment, we're really concerned with applications that touch payments or deal with sensitive PII, so we look for keywords that suggest an application is doing that. And the last one, which we haven't fully cracked yet (although I'm super interested in looking deeper into smogcloud, which Bishop Fox talked about this morning), is whether an application is external or internal. We sort of infer it from where we discovered it: if we find it through Amass or Findomain, we put it in the external bucket, and if we find it through one of our internal

DNS servers, we put it in the internal bucket. That's not always correct, because some internal things have still somehow leaked externally, but it's a pretty good indicator. I've touched on this already, but Eyeballer is good for finding login pages and old-looking sites. It's a Bishop Fox tool they published back in the fall, and it's really easy to use: use headless Chrome with your list of web hosts to generate screenshots, feed those into Eyeballer, and let Eyeballer do the classification for you. They've got four different classifiers right now; we're just looking at login

pages and old-looking sites. If you're familiar with SSL Labs, which is hosted by Qualys, you might like testssl.sh. It's a free, open-source alternative that you can run against your own hosts, so you don't have to worry about API throttling, and you can tune it. It really is a Swiss Army knife of TLS checks. We run the fast version that just looks for critical issues, and we report those as risk factors. Then there's repository activity. Stuart was talking about risky keywords, and that's what we look for in new repositories. We actually alert on new repositories, because when somebody creates one, its name usually gives a

good clue of what it does. If nothing else, it's a chance to engage with the developers and say, "Hey, we noticed you created a new repo called do-super-sensitive-thing-with-customer-data; let's talk about it." If you're using an on-prem version, you can also use a tool like Talisman or git-secrets to look for hard-coded secrets. We're not there yet, because we're on Bitbucket Cloud, which doesn't support those hooks, so we're building something that monitors for changes instead. We can't do the blocking, but we can do the reporting. If you've got an on-prem repository solution, though, you can use one of these tools. So that's our full pipeline.
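Weak TLS and login or old-looking pages come from testssl.sh and Eyeballer. The three remaining risk factors are simple enough to sketch directly: HTTP served without a redirect to HTTPS, risky keywords, and the internal/external bucket inferred from where a host was found. This is a minimal illustration under stated assumptions. The keyword list, the source labels in `EXTERNAL_SOURCES`, the `HostFindings` fields, and the factor strings are all hypothetical, and the HTTP status and `Location` header are assumed to come from a plain-HTTP request that did not follow redirects.

```python
import re
from dataclasses import dataclass, field
from typing import List, Optional, Set

# Hypothetical keywords for "touches payments or sensitive PII"; tune for your environment.
RISKY_KEYWORDS = ("payment", "checkout", "card", "pii", "ssn", "customer")
# Leading word boundary only, so "payments" and "customer-data" still match.
_RISKY_RE = re.compile(r"\b(" + "|".join(RISKY_KEYWORDS) + r")", re.IGNORECASE)

# Hypothetical labels for discovery sources that look at the outside world.
EXTERNAL_SOURCES = {"amass", "findomain"}


@dataclass
class HostFindings:
    url: str
    http_status: Optional[int] = None    # status of a plain-HTTP GET; None if HTTP isn't served
    http_location: Optional[str] = None  # Location header of that response, if any
    text: str = ""                       # response body and/or repository name to scan
    sources: Set[str] = field(default_factory=set)  # where the host was discovered


def redirects_to_https(status: Optional[int], location: Optional[str]) -> bool:
    """True if the plain-HTTP response is a 3xx redirect to an https:// URL."""
    return (status is not None and 300 <= status < 400
            and bool(location) and location.lower().startswith("https://"))


def risk_factors(f: HostFindings) -> List[str]:
    factors: List[str] = []
    # HTTP is served but does not send the visitor to HTTPS.
    if f.http_status is not None and not redirects_to_https(f.http_status, f.http_location):
        factors.append("http-without-https-redirect")
    # Risky keywords in the page content or repository name.
    hits = sorted({m.lower() for m in _RISKY_RE.findall(f.text)})
    if hits:
        factors.append("risky-keywords:" + ",".join(hits))
    # Heuristic bucket: anything an external tool can see is treated as external.
    factors.append("external" if f.sources & EXTERNAL_SOURCES else "internal")
    return factors
```

Treating a host as external whenever an external tool found it reflects the caveat in the talk that some internal systems leak outside. A leading-boundary-only regex trades some false positives (for example, "cardinal" matches "card") for not missing plural or compound names.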

The questions are piling up in Slack, so I'll get to those in just a second. What I want to talk about a little is outcomes. We've successfully implemented a lot of this pipeline, so we've moved past passive discovery; now we go out and find things. Developers still come to us and we still have open lines of communication, but we're just as likely to find something new that they haven't told us about as we are to have them come to us, and that gives us a chance to start the conversation. The really big benefit is that we basically have a continuously up-to-date application inventory; you

know, we're constantly building it. This thing runs all the time: when a run finishes, it just starts up again, so it never ends. We're able to use the identifiers I pointed out as risk factors. We attach them to the finding, which gives us a quick read on whether we need to go deeper into the site. We send all these alerts to a Slack channel that we monitor and that our developers can monitor too, so we get good visibility into all of this. And I'll give you a little teaser: we built this out with some of those open-source

tools I talked about, but we also wrote some proprietary code, because we built this for our team at work and it belongs to our employer. So I can't just post it to GitHub and say "have at it," but I'll show you real quick how we built it, and I'll give you some better news right after. Here's an example of one of the Slack alerts: two new APIs were discovered in Azure, two new live web hosts were discovered, plus some new Bitbucket repositories, and we can look at each of those to see what they look like and what

the risk factors were. We built it as a REST API, so we have our own Swagger page, and a cron job runs it on repeat. Like I said, the bad news is I can't just take this and post it. What I'm doing right now is building a version out in the open. It's literally fresh as of this week, and I published it to my GitHub; there's an AppSec discovery repo there that you're welcome to check out. Like I said, it's really raw, but I've implemented a significant part of the pipeline, and if nothing else you can

use it as an example of how to use some of those tools. I'm going to keep building it out. It'd be awesome if you want to collaborate on it; I'm totally down with that. And if you just want to pull it down and use it to bootstrap something in your organization, that'd be awesome too. That wraps it up. Stuart, did you have anything else?

Yeah, about risk factor identification: the goal is to decide whether a site is worth a person taking a look at, right? In our organization we have over two thousand applications, so based on the risk factors we might

engage and we might not, but it's good to know that these applications exist in general. That's really what we're looking at there. I think it went well, Jeremy.

All right, let's see if there are any questions we can answer. Yeah, there are some good ones. Here's one: "How would you do API discovery in an environment where documentation is outdated or not done at all?" This is a good model for that. What you really need to do is look for patterns. Even if you don't know where everything is, if you know where some stuff is, you

probably have a pattern you can follow. If your developers aren't putting their APIs under something like a /api endpoint, they're probably doing something similar. If nothing else, you can search source code repositories for those patterns and then build them into your automation. Unfortunately, there's no magic. APIs are tough, especially REST APIs, because discoverability is very tricky with them: there's nothing to crawl. It's not like a standard website, where you point a crawler at the root page and get back links and forms that you can then crawl. If you visit the root page

of an API, you're just as likely to get a blank page as anything else. But if they're using a standard like Swagger or OpenAPI, you can look for those Swagger or OpenAPI docs and use them as your documentation. Next question: "For web host discovery, would you recommend a network perimeter scan for applications communicating through firewalls, B2B or B2C?" Yeah, that would actually be a really cool feature. I think you're talking about looking at your firewall logs and basically building your own CASB kind of thing, right? You could do that. You'd have to figure out what you're using, and it's

going to depend on what kind of firewall you have, but as long as it's an enterprise firewall, there's probably an API and logs you can pull out and data-mine for B2B and B2C communication. Okay, cool, it looks like we're getting the hook. I'll answer the rest of the questions in the Slack channel. Thanks, guys, I will post the slides. Thanks for your attention, and have a great rest of the conference.
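The API discovery step and the first Q&A answer both come down to visiting a few well-known locations on each web host and checking whether a Swagger/OpenAPI document comes back. The sketch below assumes a hypothetical list of candidate paths (replace it with wherever your developers actually publish specs). It treats a response as a spec if it is JSON with a top-level `openapi` (3.x) or `swagger` (2.0) key, or YAML text with one of those keys at the start of a line. The standard library has no YAML parser, hence the regex. Note that `urlopen` verifies TLS certificates, so hosts with self-signed certificates would be skipped unless you pass a custom SSL context.

```python
import json
import re
from typing import Iterable, List
from urllib.parse import urljoin
from urllib.request import urlopen

# Hypothetical list of common spec locations; adjust to your environment.
CANDIDATE_PATHS = (
    "swagger.json",
    "openapi.json",
    "openapi.yaml",
    "swagger/v1/swagger.json",
    "v2/api-docs",
    "v3/api-docs",
)

# A top-level "openapi:" (3.x) or "swagger:" (2.0) key at the start of a line.
_YAML_ROOT_KEY = re.compile(r"^(openapi|swagger)\s*:", re.MULTILINE)


def looks_like_openapi(body: str) -> bool:
    """Heuristically decide whether a response body is a Swagger/OpenAPI document."""
    try:
        doc = json.loads(body)
    except ValueError:  # not JSON, so try the YAML heuristic
        return bool(_YAML_ROOT_KEY.search(body))
    return isinstance(doc, dict) and ("openapi" in doc or "swagger" in doc)


def candidate_urls(base_url: str, paths: Iterable[str] = CANDIDATE_PATHS) -> List[str]:
    """Join each candidate path onto the web host's base URL."""
    base = base_url.rstrip("/") + "/"
    return [urljoin(base, p.lstrip("/")) for p in paths]


def find_openapi_docs(base_url: str, timeout: float = 5.0) -> List[str]:
    """Return the candidate URLs on base_url that serve something spec-like."""
    found = []
    for url in candidate_urls(base_url):
        try:
            with urlopen(url, timeout=timeout) as resp:
                body = resp.read(2_000_000).decode("utf-8", errors="replace")
        except (OSError, ValueError):  # HTTP errors, refused connections, bad URLs
            continue
        if looks_like_openapi(body):
            found.append(url)
    return found
```

Any URL returned by `find_openapi_docs` can then be handed to Postman, Burp, ZAP, or a commercial scanner, as described in the talk.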